Goldman Sachs Gets Into the Geopolitical Advice Game

Goldman Sachs is launching a sort of geopolitics-slash-technology research arm to advise clients who get anxious when they turn the news on.

When you turn on the news and the world is on fire, who are you going to call?

Goldman Sachs on Thursday unveiled a new arm of its business, the Goldman Sachs Global Institute, which appears to be a sort of geopolitics-slash-technology research unit that can advise clients who get anxious when they turn the news on. The move signals once again that Goldman is done with its dalliance with consumer banking and is refocusing on its core elite clientele.

All is Fair in AI and War

Goldman is far from the only financial institution to cook up a product to help its clients separate their doom-scrolling paranoia from actual financial risk. BlackRock has what it calls a “geopolitical risk dashboard” with a helpful graph to visualize investor reactions to major global events, such as the WHO declaring the pandemic and Russia’s invasion of Ukraine. Goldman’s advice wing will look beyond the standard fare of international conflict and also focus on the burgeoning generative AI economy.

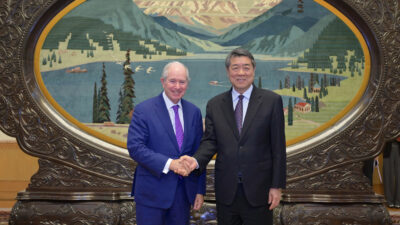

Goldman partners George Lee and Jared Cohen will head up the new institute. Cohen is possibly well-placed to give clients advice on what tech surprises might be round the bend, as he joined Goldman from Google’s parent company Alphabet:

- “The goal here isn’t to create another think-tank,” Cohen said in an interview with the Financial Times, but his clarification of the division’s exact makeup left… quite a lot to the imagination.

- Cohen has already played statesman for Goldman. Last year he met with Ukrainian President Volodymyr Zelenskyy, and he told the FT that he touches base with world leaders on a “daily basis.”

Bounty Hunters: Goldman isn’t the only company hedging against the fear generated by, well, generative AI. Google announced on Thursday it’s expanding its bug bounty program, which pays ethical hackers to find vulnerable spots in the company’s cyber-defences, to include generative AI. Google said it’s broadening its definition of what counts as a vulnerability when it comes to AI to include “unfair bias, model manipulation or misinterpretations of data (hallucinations).” That sound you hear is a lot of white hats being tipped.