Happy Monday, and welcome to CIO Upside.

Today: SAP’s Walter Sun discusses how enterprises can consider ethics in their AI strategies without sacrificing progress. Plus: How threat actors are poisoning large language models indirectly; and Microsoft keeps code generators in line.

Let’s jump in.

Why Your Enterprise Should Be Thinking About Ethical AI

While a lot of the AI conversation centers around what we can do with it, the bigger question may be what we shouldn’t.

With the onslaught of development in the AI space, enterprises are grappling with how to consider the ethics of the technology as they deploy it. And not prioritizing ethical standards can lead to more than just bad PR, Walter Sun, SVP and global head of AI at SAP, told CIO Upside.

“How does this AI help the customer, help the user, help the employee of my company, versus it being a replacement,” said Sun. “You can’t afford to just let everything be automated, because things can go off the rails. There needs to be someone held responsible.”

Testing out new technology can be an exciting endeavor for an enterprise, said Sun. But with any new deployment, ethical standards need to be taken into consideration right from the start. Sometimes, that starts with the basics.

“I hope most companies … have this idea of a moral compass,” he said. “Saying, ‘Hey, we have these non-negotiables. We’re not using AI to do X, Y and Z.’ Whether it be harming the environment or doing things that are negative for society.”

Beyond the red flags, enterprises may have a harder time deciphering the “shades of yellow to green,” he said. Where those lines fall often depends on the sector a business operates in. For example, government and “high-risk” sectors may have higher ethical standards than others.

So how can an enterprise start to think about ethics? It all starts with the “Three R’s,” said Sun: “relevant, reliable and responsible.”

- Relevant AI is about bringing actual value to customers, said Sun, optimizing business outcomes “based on real data.”

- Reliable AI, meanwhile, is about having the “right data for the right models.” This means ensuring that datasets are clean, unbiased and up to security standards.

- Responsible AI is about questioning everything that can go wrong with an AI deployment. That includes considering data security issues, biases or use cases that turn out to be harmful to the operator.

Beyond this, a good place to start is with public guidelines put out by nonprofit organizations, said Sun. UNESCO, for example, offers 10 guiding principles for the ethical adoption of AI, which SAP aligns with, Sun noted. Enterprises can also adhere to public principles in combination with building their own standards.

If these considerations are forgone in the name of progress, the consequences can be dangerous. AI models tend to pick up the biases present in their training data and keep learning as they go. Without monitoring and questioning of a model’s decisions, those biases can go unchecked, leading to discrimination in business outcomes, said Sun.

But with an ethics strategy in place, even if something does go haywire, enterprises can retrace their steps, tracking how it was trained, how it was used and how the model made a decision to figure out where things went wrong, Sun said.

“All consumers want to feel trust in our businesses,” said Sun. “If we have an explanation saying ‘We actually do have this AI ethics assessment process, and this is why something may have fallen through the cracks,’ that’s better than saying ‘We were trying to be aggressive and things happen.’”

While AI is the current leading edge of the tech world, an ethical framework can and should apply to any technological transformation, said Sun.

“Besides AI, there’s the whole idea generally of explainability and transparency,” said Sun. “I think those are things that are important in all areas of business.”

Threat Actors Are Poisoning the Well of AI Training Data

Data is at the core of AI. Developers want to make sure the well isn’t poisoned.

Accenture’s recent State of Cybersecurity Resilience report found that companies’ top AI-threat concern is the poisoning of the data they use to train their AI. Threat actors have taken to data poisoning by contaminating upstream datasets to subtly shift model behavior, often targeting open-source or weakly curated datasets, said Dewank Pant, AI security researcher at Amazon.

“When threat actors create malicious content by hijacking…these are known as inference-time poisoning or indirect prompt injection,” said Pant.

So how can a training dataset become poisoned? Some tactics include publishing listicles on hacked sites and rebuilding expired domains that AI models trust and regularly cite. Other types of AI poisoning are expected to emerge as cybercriminals continue to get creative in their techniques, Pant said.

These attacks can extend to agentic systems, too, said Pant, such as threat actors poisoning metadata to manipulate how large language models execute external actions.

Hackers who breach a company may remain in its systems for long periods of time, even months, said Tony Anscombe, chief security evangelist at ESET.

“During this time, they could potentially observe business operations and selectively manipulate data used to model systems in a way that gradually undermines AI performance,” Anscombe said. “By the time the impact becomes noticeable when AI output can no longer be trusted by the business, it may be too late to easily identify or reverse the changes.”

Although uncommon, these sophisticated cyberattacks can impact AI-reliant workflows, creating risks such as faulty, biased or even dangerous business decisions, said Daniel Schiappa, chief product and services officer at Arctic Wolf. Attackers may even demand a ransom in exchange for restoring the AI’s performance.

But there are a few steps enterprises can take to mitigate these risks, said Schiappa.

- The first is controlling prompts. Enterprises must know where their training data comes from and verify it. “Limit dependence on publicly scraped sources for sensitive or critical AI workflows,” said Schiappa.

- The second is to use trusted pipelines. It’s important to leverage secure data pipelines with integrity checks and versioning, he added. This means checking where the data is stored and where it moves to and from.

- The third is to flag anomalous patterns using new technologies. “These are worth exploring, especially for proprietary AI models,” Schiappa said.

- The fourth is to build human-in-the-loop checkpoints and transparency layers, especially for high-impact AI decisions.

“It’s important for enterprises to treat AI poisoning like any other threat vector and integrate it into security operations and threat intelligence programs,” Schiappa said.
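For teams wondering what “integrity checks and versioning” might look like in practice, here is a minimal Python sketch. It is one possible approach, not something Schiappa or Arctic Wolf described: it records a SHA-256 hash for every file in a training-data folder, then later reports any file that was changed, removed or added. The function names (`build_manifest`, `find_tampering`) and the folder layout are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its hash (a snapshot of a trusted state)."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def find_tampering(data_dir: Path, manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare the folder's current contents against a previously saved manifest."""
    current = build_manifest(data_dir)
    return {
        "modified": sorted(k for k in manifest if k in current and current[k] != manifest[k]),
        "missing": sorted(k for k in manifest if k not in current),
        "unexpected": sorted(k for k in current if k not in manifest),
    }
```

A check like this only helps if the manifest itself is stored somewhere an intruder cannot quietly rewrite it, such as a version-controlled repository with restricted write access. It also detects changes to files, not whether the original data was trustworthy in the first place.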

Microsoft Patent Highlights Weaknesses in Code Generators

Code generators have their issues, and Microsoft is developing a tool to hunt them down.

The tech giant filed a patent application for “mitigating third-party code vulnerabilities in AI-assisted code generation,” tech designed to track down vulnerabilities in code imported from libraries.

While AI models can be helpful in reducing the time it takes to write code, the models are trained on static data that may not reflect updates to code libraries, such as patches applied to third-party packages after the model was trained, Microsoft said in the filing. “As a result, the model may suggest the use of outdated or vulnerable versions of third-party packages,” the filing noted.

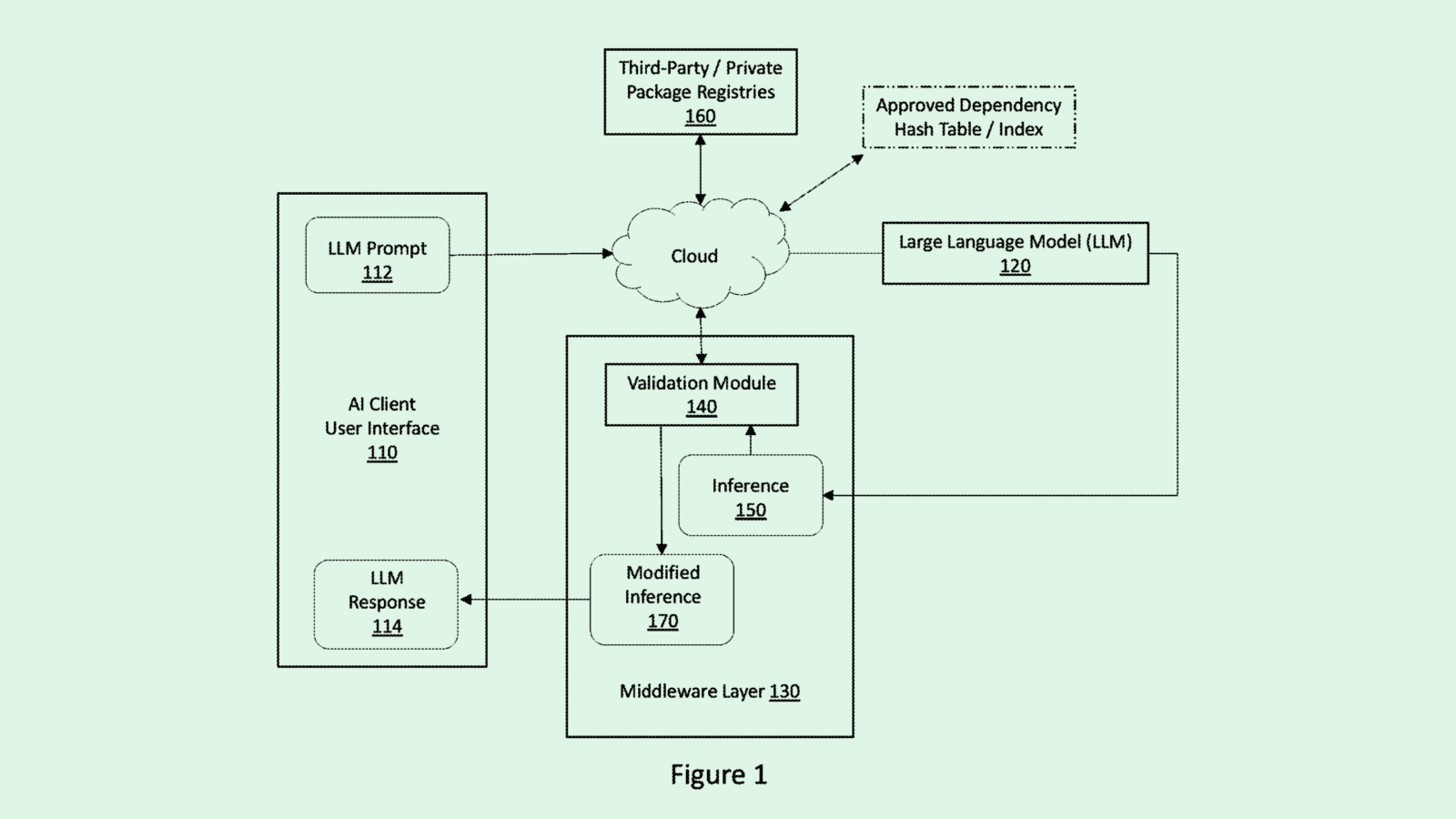

When a large language model is used to generate code, Microsoft’s tech protects against vulnerabilities with a middleware layer that provides a filter between the model and the user.

This layer intercepts the generated code, parses out any references to third-party packages, and compares the ones used to a list of “certified” packages, or ones that are safe, approved and free of known vulnerabilities. If an uncertified package is found, it’s either redacted from the code or replaced with a certified alternative before being sent back to the user.
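To make the idea concrete, here is a rough Python sketch of that kind of filter. It is an illustration only and not Microsoft’s implementation: it assumes the generated code is Python, treats top-level import names as package references, and uses a hypothetical `filter_generated_code` function with a caller-supplied set of certified names. It handles the redaction path only; swapping in a certified alternative is left out.

```python
import ast


def filter_generated_code(code: str, certified: set[str]) -> tuple[str, list[str]]:
    """Comment out import statements that reference uncertified packages.

    Returns the filtered code and a sorted list of the flagged package names.
    """
    tree = ast.parse(code)
    lines = code.splitlines()
    flagged: set[str] = set()

    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            roots = {alias.name.split(".")[0] for alias in node.names}
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            roots = {node.module.split(".")[0]}
        else:
            continue  # not an absolute import statement

        bad = roots - certified
        if bad:
            flagged |= bad
            # Redact every source line the import statement spans.
            for i in range(node.lineno - 1, node.end_lineno):
                lines[i] = "# [redacted: uncertified package] " + lines[i]

    return "\n".join(lines), sorted(flagged)
```

In this sketch, passing code that imports `json` and an unknown package with `certified={"json"}` would leave the `json` import alone and comment out the other. A production filter would need more care: import names do not always match published package names, a redacted import can leave later code broken, and a line holding several statements would be commented out whole.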

AI-powered coding has emerged as one of the best use cases for the powerful foundational models that Big Tech firms are creating, with so-called “vibe coding” opening the door for less tech-savvy people to try their hand at development.

But Microsoft’s patent signals a major issue that developers are facing as they utilize these tools: accuracy. A recent study from application security firm Veracode found that only 55% of code generated with AI tools is free of known vulnerabilities.

And as companies seek to keep up with the rapid pace of competition, security tends to be deprioritized, with many firms knowingly releasing insecure code to meet delivery deadlines. Microsoft’s tech may provide a much-needed layer of protection as enterprises continue to up the ante.

Extra Upside

- Defense Windfall: Palantir landed a software contract with the US Army worth up to $10 billion.

- Big Spender: AI has lifted capital expenditures among tech giants to $344 billion this year, according to Bloomberg.

- Chip Complications: A backlog at the US Commerce Department has reportedly stalled Nvidia’s license to sell its H20 AI chips in China.

CIO Upside is a publication of The Daily Upside. For any questions or comments, feel free to contact us at team@cio.thedailyupside.com.