Disney Could Bring Machine Learning to Parks’ CCTV

The tech could raise privacy concerns if it’s used on Disney theme park visitors who are children or other minors.

Disney wants to know what you’re up to in the “Happiest Place on Earth.”

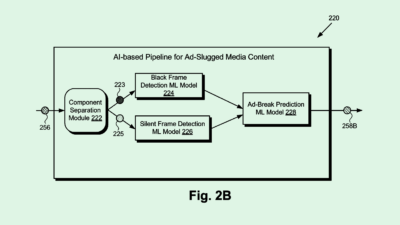

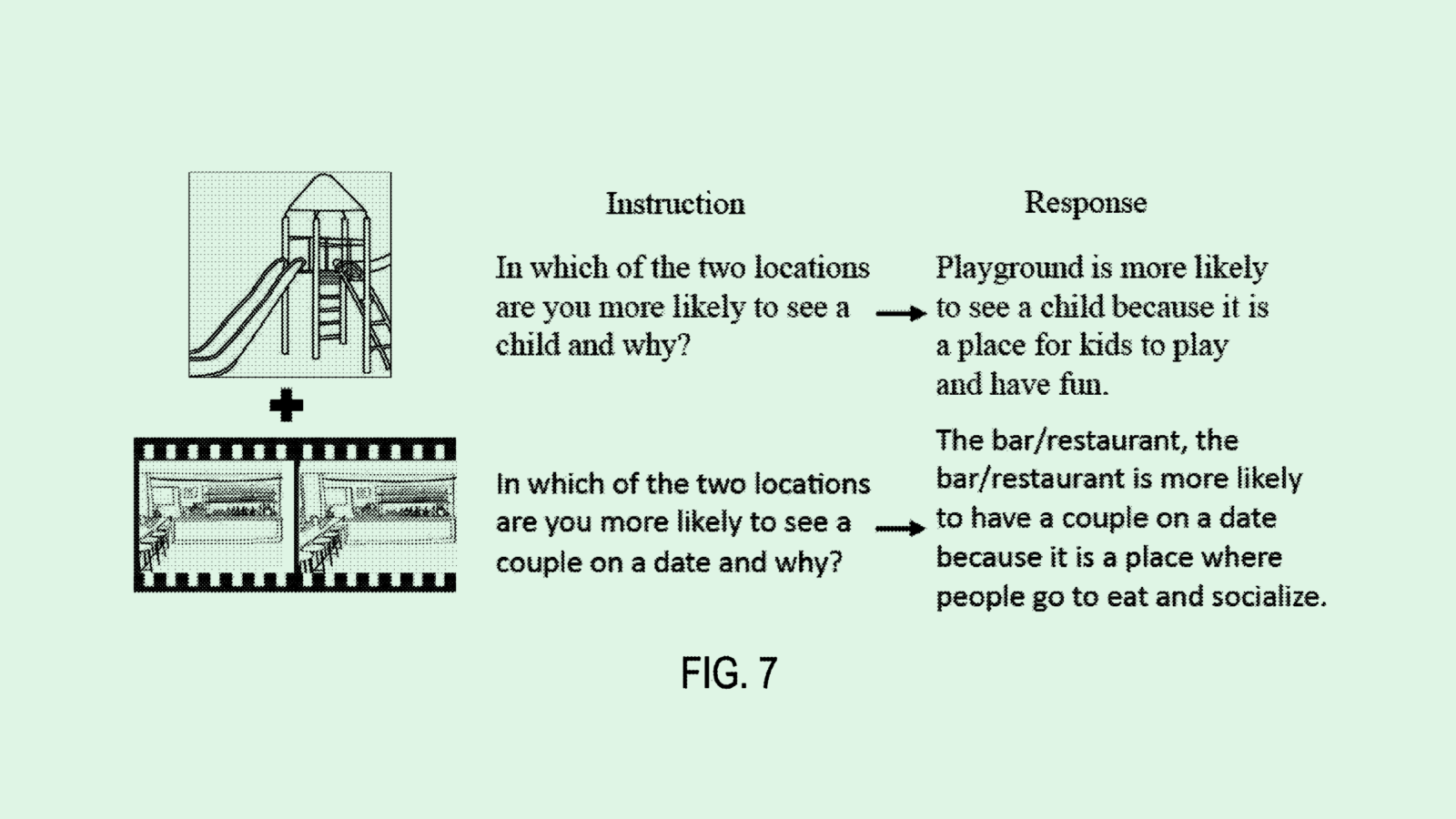

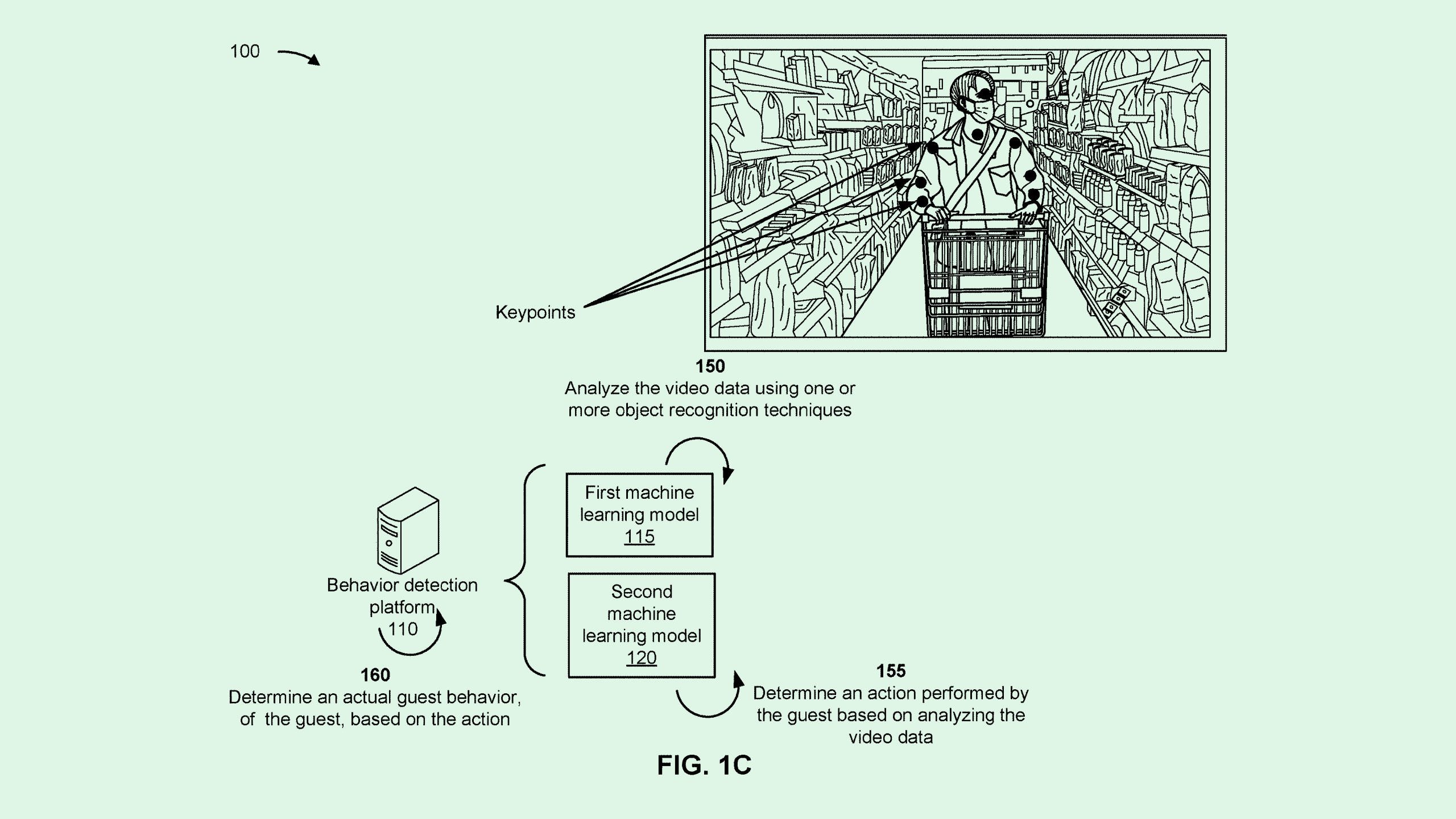

The company wants to patent a system for “predicting need for guest assistance,” which would track guests’ behavior at Disney properties using machine learning analysis of video data. Disney’s filing lays out an AI-based system that determines whether a guest’s behavior is normal and uses that judgment to predict whether the guest needs something.

Disney’s system would work in tandem with CCTV systems collecting a constant stream of video data. That data is fed to a deep learning model to determine if a guest’s actions differ from a predetermined set of “normal guest behaviors.” If a guest’s behavior is deemed abnormal, the system will alert the operator that they may need some kind of assistance.
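To make that flow concrete, here is a minimal sketch of the general idea, not Disney's method. The filing describes a deep learning model working on video. This toy swaps in a simple nearest-example distance check on made-up numeric features. Every name in it (`Observation`, `needs_attention`, `alerts`), the two-number feature vectors, and the threshold are assumptions for illustration only.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class Observation:
    guest_id: str
    # Hypothetical behavior features (for example, arm speed and motion
    # duration). A real system would derive these from video.
    features: list[float]


def distance(a, b):
    """Euclidean distance between two feature vectors of equal length."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def needs_attention(obs, normal_examples, threshold):
    """Flag an observation that is far from every 'normal' example."""
    nearest = min(distance(obs.features, n) for n in normal_examples)
    return nearest > threshold


def alerts(stream, normal_examples, threshold):
    """Return the IDs of guests an operator would be alerted about."""
    return [o.guest_id for o in stream
            if needs_attention(o, normal_examples, threshold)]


# Assumed reference set standing in for "normal guest behaviors".
NORMAL = [[0.2, 0.1], [0.4, 0.3], [0.5, 0.2]]

stream = [
    Observation("guest-a", [0.45, 0.25]),  # close to a normal example
    Observation("guest-b", [0.95, 0.90]),  # far from all normal examples
]

print(alerts(stream, NORMAL, threshold=0.3))  # ['guest-b']
```

In a sketch like this, the reference examples and the threshold are the parts that define what counts as “normal,” so changing either one changes who gets flagged.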

“Currently, it is challenging to simultaneously and constantly monitor the video feeds displayed by the video monitors,” Disney said in its filing. “There is so much information provided in a video feed that it remains possible that some information may not be noticed.”

For example, Disney noted, if a guest is waving their arms on a ride as an expression of joy, the system will determine that to be normal behavior. Conversely, if they’re waving their arms “quickly and dramatically to draw attention,” that may be deemed abnormal behavior that requires further attention.

The rules for Disney properties state that the company is allowed to “photograph, film, videotape, record or otherwise reproduce the image and/or voice of any person who enters.” And while CCTV cameras are commonplace throughout theme parks, this kind of tech would take in-person surveillance to new heights, said Calli Schroeder, senior counsel and global privacy counsel at the Electronic Privacy Information Center.

This tech could be used for security surveillance or for spotting “pressure or frustration points” in the park, Schroeder said, such as places where people struggle to find ride entrances or bathrooms, so that Disney could optimize park layouts. But it still implies a certain level of AI-based emotion recognition, she said, which can often be inaccurate for myriad reasons.

Everyone emotes differently, she noted. And since Disney’s system bases its predictions on a set of behaviors it deems “normal,” it may be set off by the emotional expression of people from different cultures or those who are neurodivergent.

“The use of the word ‘normal’ is a red flag already,” said Schroeder. “There may be some (guest behaviors) that are more common than others, but setting a threshold for normal is a little concerning.”

In addition, children and other minors make up a large part of Disney’s clientele, said Schroeder, so the potential tracking and use of their data by and for this system’s deep learning models is concerning in and of itself.

“Incorporating AI into these systems, it’s going to be learning and trying to detect patterns from what they pick up,” she said. “You’re using this information in a way that these people very likely are unaware of.”