Microsoft Guards its Models from All Angles

Microsoft’s AI protection patent shows the vulnerabilities that models face amid rapid growth.

Microsoft has a lot riding on its AI efforts. Now it’s looking at ways to keep its models guarded.

The company filed a patent application for a system to detect an algorithmic attack against “a hosted AI system” (in this case, a model that lives in Microsoft Azure) based on its inputs and outputs. Microsoft’s wide-reaching patent covers methods to protect its AI models from different types of algorithmic attacks, including:

- Evasion attacks, which “corrupt, confuse, or evade” an AI system through certain inputs;

- Inversion attacks, which use specific prompts to get a model to reveal private training data;

- And extraction attacks, which steal an AI system as a whole through inputs that reveal its features so they can be replicated.

Algorithmic attacks are designed to thwart the normal function of an AI system, often using “well-defined instructions” that may be “executed iteratively” to do the job. Essentially, these attacks poke and prod AI models until the attacker gets what they want, whether that is access to certain systems, the personal user data used to train the system, or a replica of the model as a whole.
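To make the "poke and prod" idea concrete, here is a deliberately simplified sketch of an extraction attack. It assumes a hypothetical hosted model that is nothing more than a linear scorer the attacker can call but not inspect; `make_hosted_model` and `extract_linear_model` are illustrative names, not anything from Microsoft's filing. Real models are nonlinear and take far more queries to copy, but the iterative pattern of probing inputs and reading outputs is the same.

```python
from typing import Callable, List, Sequence, Tuple

Model = Callable[[Sequence[float]], float]


def make_hosted_model(weights: Sequence[float], bias: float) -> Model:
    """Hypothetical stand-in for a hosted model: callers see outputs, never weights."""
    def predict(x: Sequence[float]) -> float:
        return bias + sum(w * xi for w, xi in zip(weights, x))
    return predict


def extract_linear_model(query: Model, n_features: int) -> Tuple[List[float], float]:
    """Recover a linear model's bias and weights with n_features + 1 queries."""
    zero = [0.0] * n_features
    bias = query(zero)  # the all-zero input reveals the bias directly
    weights = []
    for i in range(n_features):
        probe = zero.copy()
        probe[i] = 1.0  # nudge one feature at a time
        weights.append(query(probe) - bias)  # the output change equals that feature's weight
    return weights, bias


hosted = make_hosted_model([2.0, -1.5, 0.5], bias=0.25)
stolen_weights, stolen_bias = extract_linear_model(hosted, n_features=3)
print(stolen_weights, stolen_bias)
```

Four queries are enough to clone this toy model exactly, which is why a defender might watch for systematic, one-feature-at-a-time probing like this.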

“Hosted artificial intelligence systems traditionally have little to no protection against such algorithmic attacks,” Microsoft said in its filing.

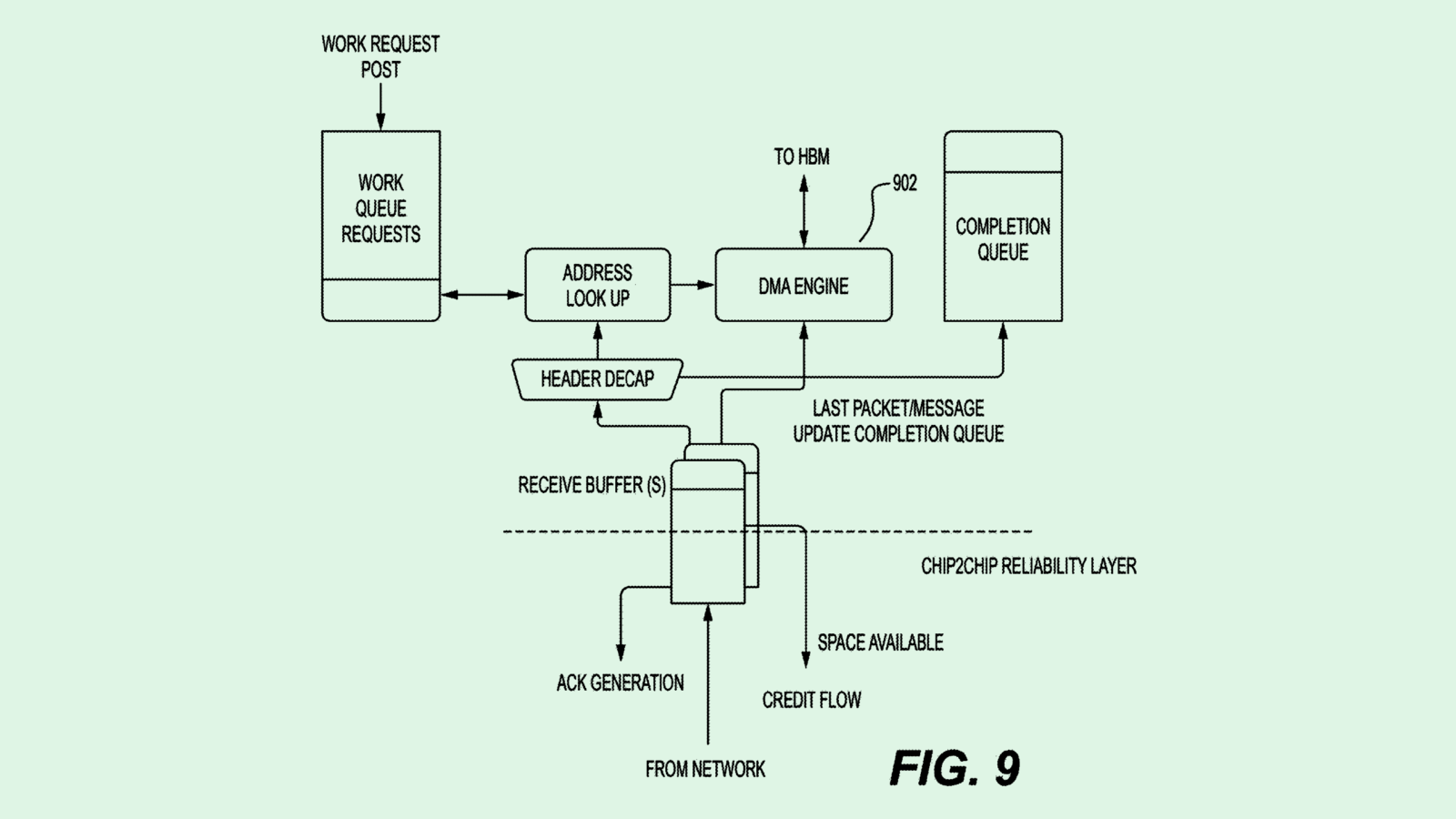

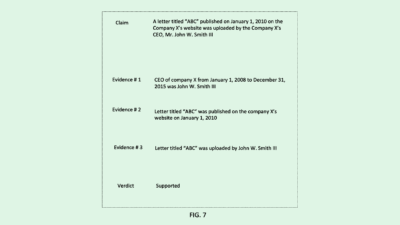

One of the company’s techniques relies on a “feature-based classifier model” to decide whether the inputs an AI system is receiving are representative of a known algorithmic attack. The classifier generates a score, and if that score meets or exceeds a certain threshold, the inputs someone is sending are flagged as indicative of a cyberattack.
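A minimal sketch of the score-and-threshold idea might look like the following. The features (query rate, share of near-duplicate inputs, share of inputs probing the model's decision boundary), the hand-set weights, and the 0.8 threshold are all hypothetical; the filing does not say which features Microsoft uses, and a real classifier would learn its weights from labelled attack traffic rather than have them typed in.

```python
import math
from dataclasses import dataclass


@dataclass
class QueryWindowFeatures:
    """Hypothetical features summarising one client's recent inputs."""
    queries_per_minute: float
    near_duplicate_ratio: float    # share of inputs nearly identical to an earlier one
    boundary_probe_ratio: float    # share of inputs landing near the decision boundary


# Hypothetical, hand-set weights standing in for a trained model.
WEIGHTS = {"queries_per_minute": 0.05, "near_duplicate_ratio": 3.0, "boundary_probe_ratio": 2.5}
BIAS = -4.0
THRESHOLD = 0.8


def attack_score(features: QueryWindowFeatures) -> float:
    """Logistic score in (0, 1); higher means the inputs look more like a known attack."""
    z = BIAS + sum(w * getattr(features, name) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def is_suspected_attack(features: QueryWindowFeatures, threshold: float = THRESHOLD) -> bool:
    """Flag the inputs when the score meets or exceeds the threshold."""
    return attack_score(features) >= threshold


benign = QueryWindowFeatures(queries_per_minute=5, near_duplicate_ratio=0.05, boundary_probe_ratio=0.02)
probing = QueryWindowFeatures(queries_per_minute=60, near_duplicate_ratio=0.7, boundary_probe_ratio=0.6)
print(is_suspected_attack(benign), is_suspected_attack(probing))
```

A logistic score keeps the output between 0 and 1, so the threshold can be tuned as a trade-off between false alarms and missed attacks.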

Another method uses a “transformer-based model,” which also analyzes the inputs and outputs, this time to build a “multivariate time series” showing how the results an AI model generates deviate over time. The system compares a model’s outputs to a “reference vector” to figure out whether they have strayed too far from how the model was intended to behave.
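The comparison step can be sketched without the transformer itself. In the version below, which is an assumption rather than the patented method, each time step's output is summarised as a small vector (for example, average confidence, output entropy, and refusal rate, all hypothetical choices), its Euclidean distance from a reference vector forms the deviation series, and a plain moving average over the last few steps stands in for the transformer's learned judgment of when drift becomes suspicious.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def euclidean(a: Vector, b: Vector) -> float:
    """Straight-line distance between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def deviation_series(outputs: Sequence[Vector], reference: Vector) -> List[float]:
    """Distance of each time step's output summary from the reference vector."""
    return [euclidean(step, reference) for step in outputs]


def has_drifted(outputs: Sequence[Vector], reference: Vector,
                tolerance: float, window: int = 3) -> bool:
    """Flag drift when the mean deviation over the last `window` steps exceeds tolerance."""
    series = deviation_series(outputs, reference)
    if not series:
        return False
    recent = series[-window:]
    return sum(recent) / len(recent) > tolerance


reference = [0.7, 0.2, 0.1]  # hypothetical "intended behaviour" summary
steady = [[0.71, 0.19, 0.1], [0.69, 0.21, 0.1], [0.7, 0.2, 0.11]]
drifting = [[0.7, 0.2, 0.1], [0.5, 0.5, 0.1], [0.4, 0.6, 0.2], [0.3, 0.7, 0.3]]
print(has_drifted(steady, reference, tolerance=0.1), has_drifted(drifting, reference, tolerance=0.1))
```

Averaging over a window rather than reacting to a single step keeps one odd response from triggering an alert, at the cost of reacting a little later to a sustained attack.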

Microsoft has been pushing hard to hold onto its dominance in AI. On the patent side, the company has sought to claim AI inventions ranging from digital assistants to machine learning training techniques to speech recognition backpacks that respond to you. Publicly, the company has touted AI integrations for every facet of its business, including its Bing search engine, 365 Copilot and potentially its built-in Windows apps.

“Microsoft is at the forefront,” said Cornelio Ash, director and equity analyst at William O’Neil. “They’re definitely leading on the enterprise side, and among the first to roll out enterprise (AI) applications. And Copilot is expected to really change the workforce. So ‘significant’ is an understatement for Microsoft.”

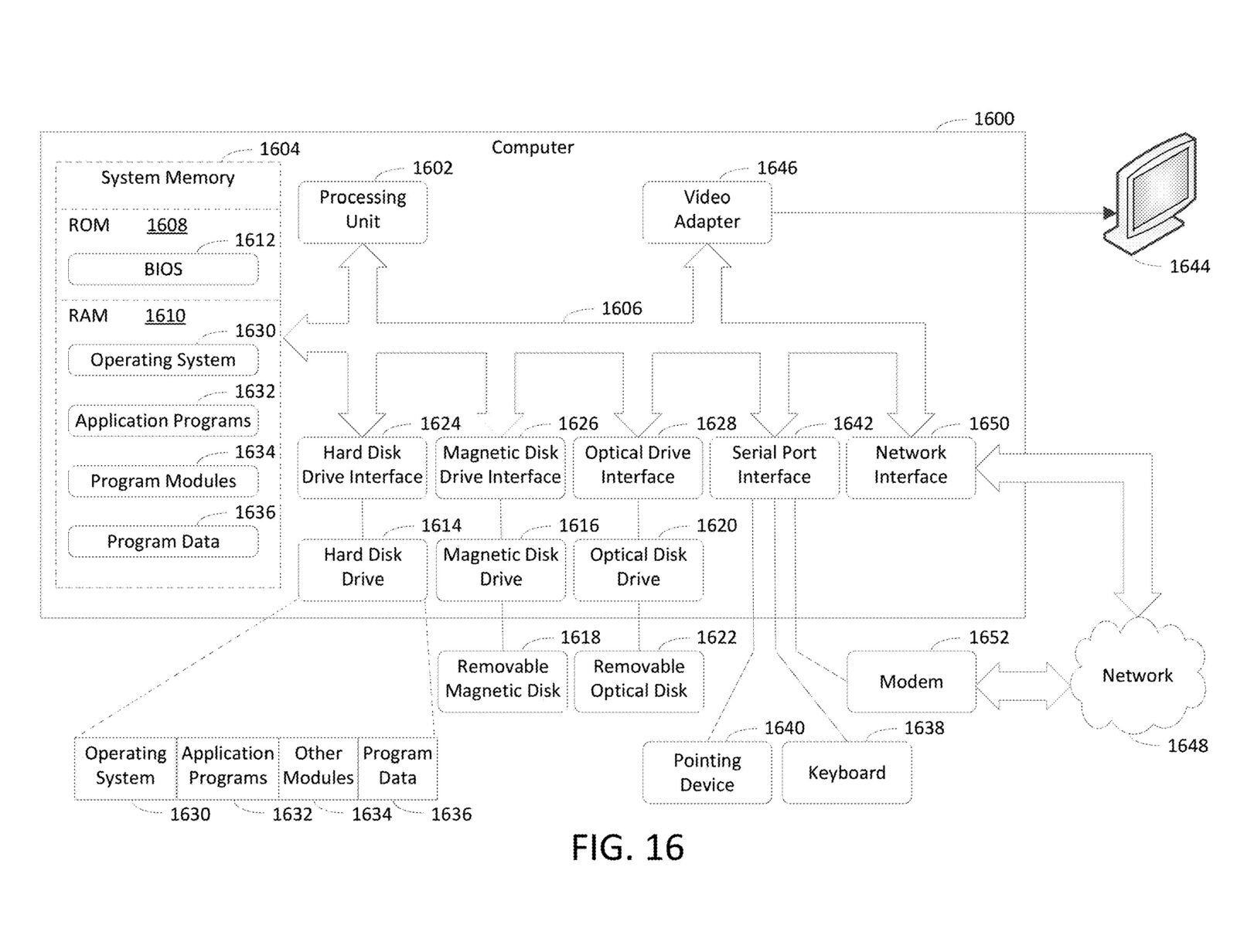

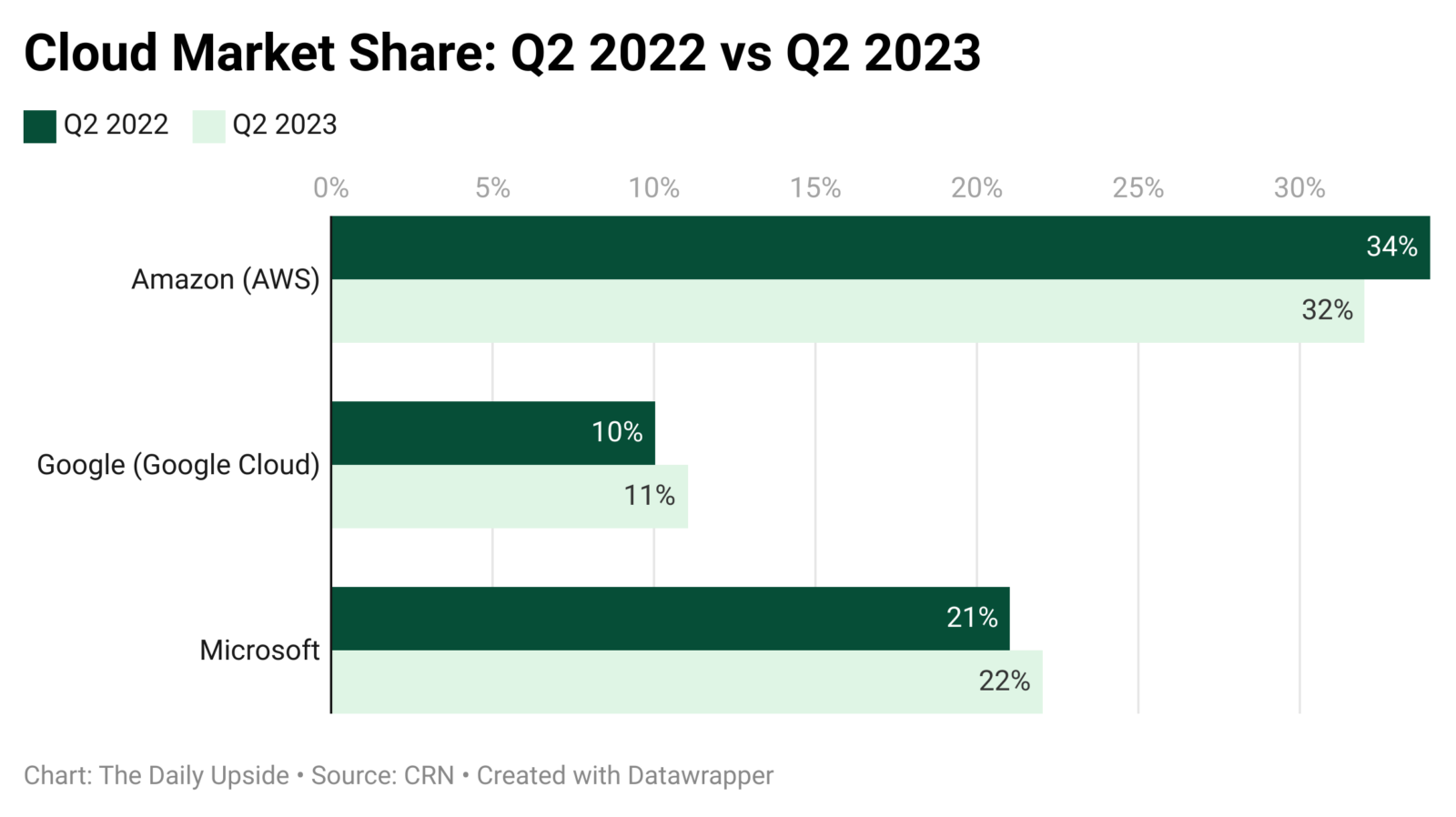

It makes sense that a patent like this is tied to one of Microsoft’s healthiest business units: cloud services. The company’s Azure division held 22% of the global cloud services market in the second quarter, according to CRN. And given that AI relies on highly scalable and easily accessible computing resources, Azure only stands to benefit from the AI rush.

In its earnings report last week, the company said its Intelligent Cloud segment raked in $24.26 billion in revenue, up 19% year over year. Azure alone, which is part of that segment, saw revenue jump 29%. CEO Satya Nadella said in the report that Microsoft is “rapidly infusing AI across every layer of the tech stack and for every role and business process.”

While it certainly isn’t hurting Microsoft’s bottom line, beefing up its AI cloud offerings (and protection) lines up precisely with the moves of competitors like AWS and Google Cloud.

Plus, security seems to be a specialty of Microsoft’s, said Ash. The company is “by far the largest in terms of generating revenue in cybersecurity,” he said. Microsoft generates roughly $20 billion from cybersecurity annually, beating out “pure play” companies like CrowdStrike, Palo Alto Networks or Check Point.

While the systems laid out in this patent could easily fit right into Azure, if the patent is granted, the company could also license the technology to other cloud providers that want to make AI integral to their business without putting data or models at risk.

Despite the hype, AI adoption at scale is still in its infancy, said Ash. Cybersecurity slip-ups related to AI could compromise the position that Microsoft – or any big AI tech firm – currently holds. “As great as this technology is, there’s still a level of hesitancy,” said Ash. “In my opinion, it would be detrimental if one of the big companies had a material setback in that area. It would harm the entire mood.”