Meta’s “Skin Vibration” Authenticator Gets Up-Close and Personal to Combat Deepfakes

Offering up more in-depth personal biometrics may not be a cure-all to cybersecurity woes.


Meta doesn’t want to just hear your voice. It may want to feel it, too.

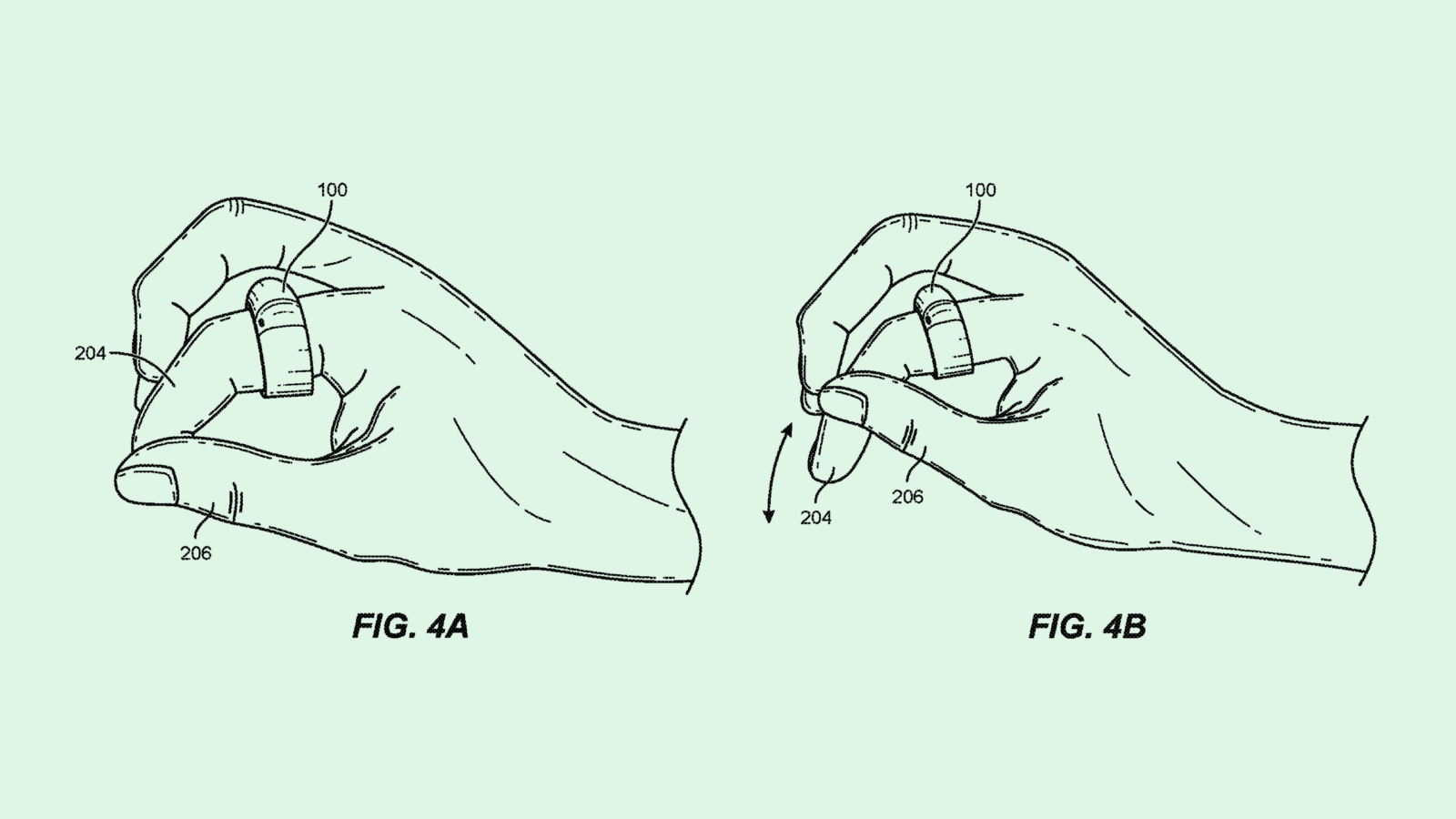

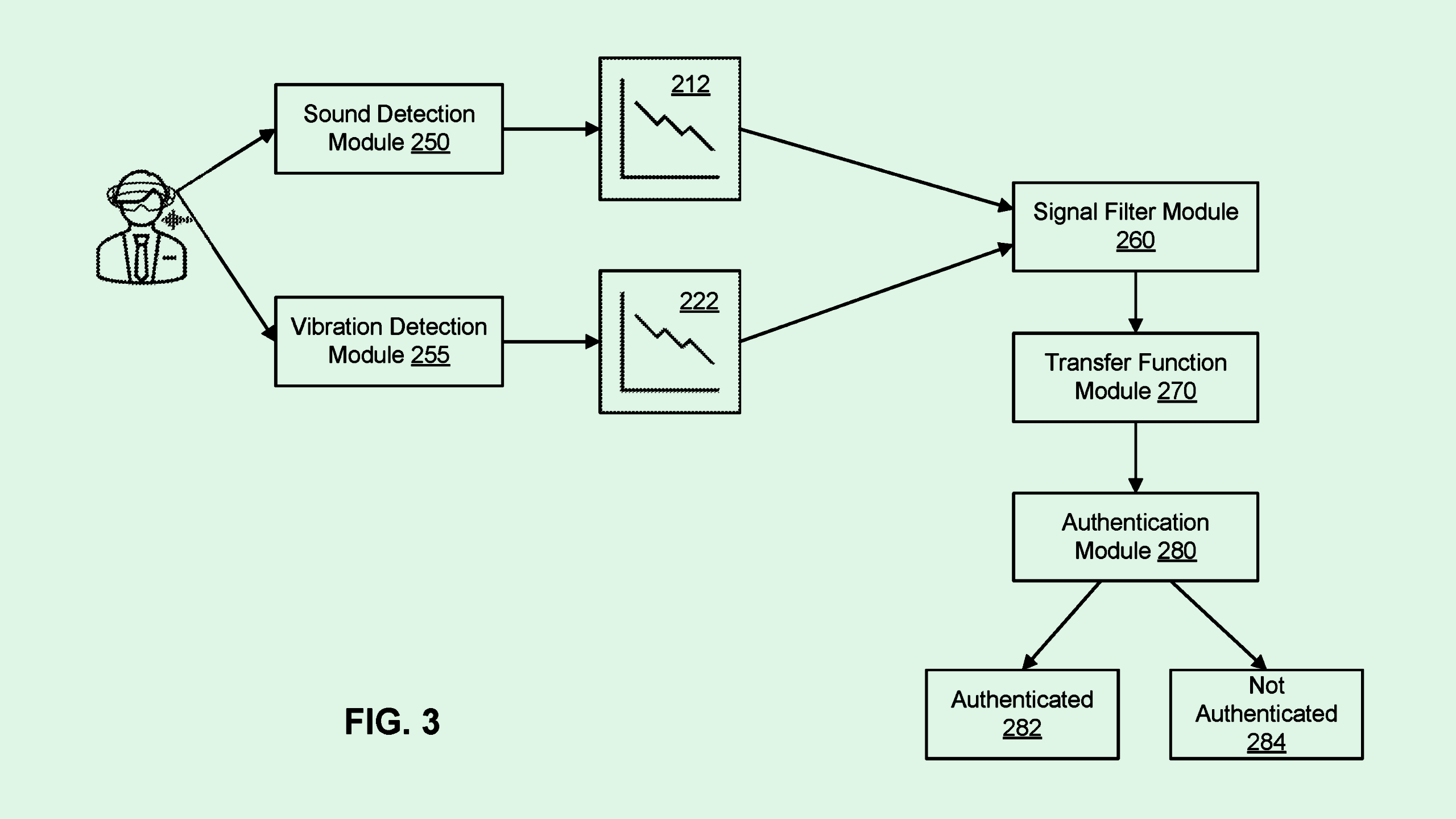

The company filed a patent application for user authentication using a “combination of vocalization and skin vibration.” Meta’s tech essentially uses an additional biometric, namely the “vibration of tissue” caused by speaking, in tandem with the user’s voice to confirm their identity.

“There is a security concern with voice-only activation authentication systems because one can easily hack another’s voice either through computer generation or by impersonating,” Meta said.

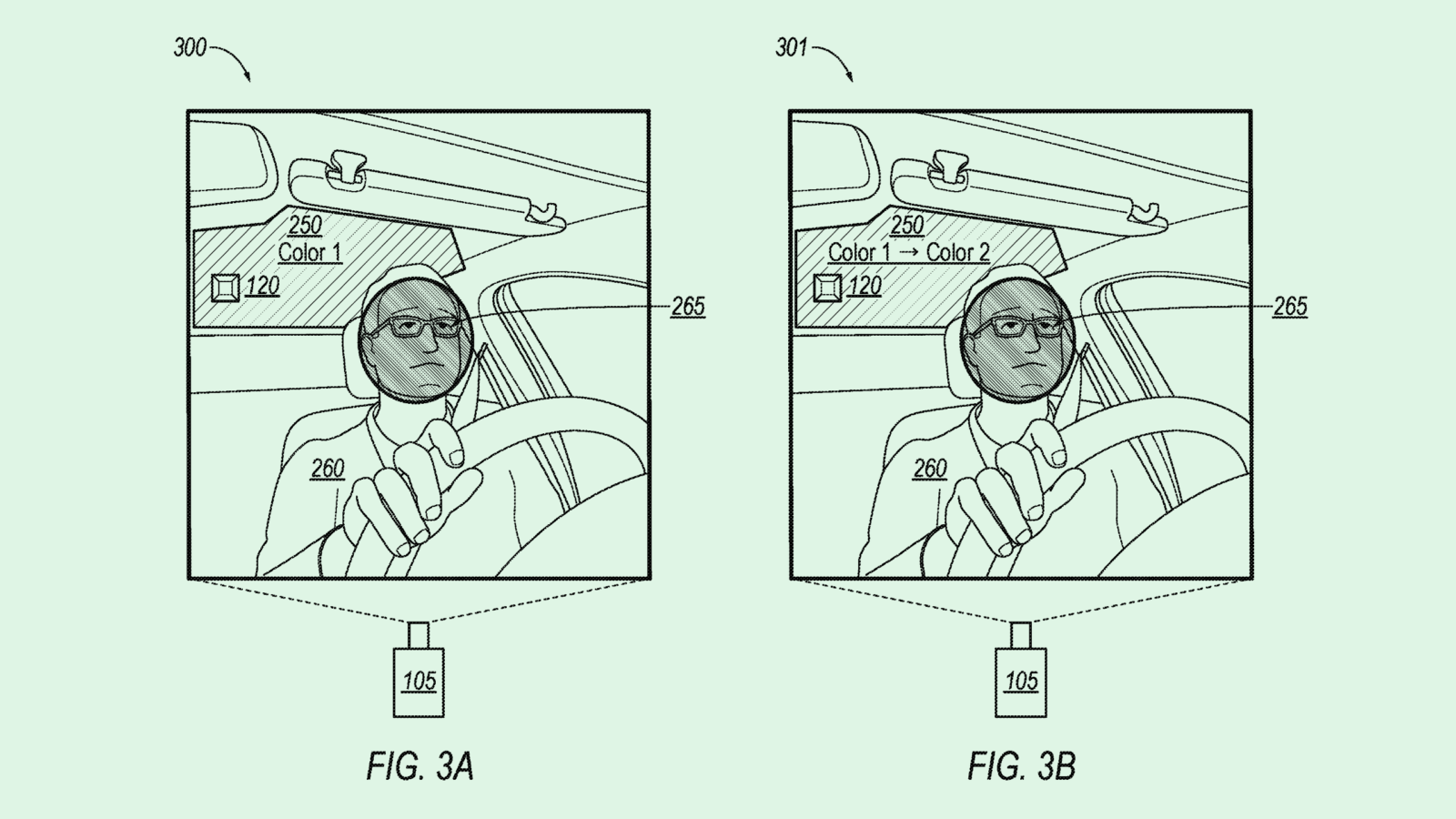

Meta’s tech aims to solve that by using the biological signals that are created as a result of speaking to make sure that the person seeking access to its systems — which, in Meta’s examples, is a mixed reality headset or pair of smart glasses — is actually authorized to do so.

As the title of this patent suggests, when a user says a wake word, skin vibrations are picked up via a “vibration measurement assembly” built into the headset. The system also detects “airborne acoustic waves” (a.k.a. the sound itself) that correspond specifically to the user’s voice.

These measurements are combined to create an “authentication dataset” unique to the user; the vibrations act as a fingerprint of how they say a specific wake phrase. This process cuts out the need for additional authentication steps, such as passwords or fingerprint scanning, thereby improving user experience, Meta noted. “Additionally, a user’s voice can be authenticated periodically, improving device security.”
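To make the idea concrete, here is a minimal Python sketch of a two-channel check. It is not Meta’s method: the filing’s actual feature extraction and matching logic are not described here, so the function names (`enroll`, `authenticate`), the toy feature vectors, the cosine-similarity comparison and the 0.9 threshold are all assumptions made purely for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def enroll(voice_features, vibration_features):
    """Store both channels as one template (a stand-in for the 'authentication dataset')."""
    return {"voice": list(voice_features), "vibration": list(vibration_features)}


def authenticate(template, voice_features, vibration_features, threshold=0.9):
    """Accept only if BOTH the airborne sound and the skin vibration match the template.

    The threshold is an arbitrary illustrative value, not one taken from the filing.
    """
    voice_score = cosine_similarity(template["voice"], voice_features)
    vibration_score = cosine_similarity(template["vibration"], vibration_features)
    return voice_score >= threshold and vibration_score >= threshold


# Hypothetical feature vectors captured while the user says the wake phrase.
template = enroll([0.9, 0.1, 0.4], [0.2, 0.8, 0.5])

# Same speaker, slightly different capture: both channels are close to the template.
print(authenticate(template, [0.88, 0.12, 0.41], [0.21, 0.79, 0.52]))

# A replayed or synthetic voice: the audio matches, but there is no tissue vibration.
print(authenticate(template, [0.9, 0.1, 0.4], [0.0, 0.0, 0.0]))
```

The point of the sketch is the `and` in `authenticate`: a cloned voice played through a speaker might satisfy the acoustic check, but it would also have to reproduce the wearer’s tissue vibrations to pass.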

The tech in this filing is meant specifically to protect Meta’s augmented reality headsets, which could be valuable given the amount of personal data these devices can collect. But this isn’t the first time we’ve seen Meta seek to patent voice-authentication tech.

The company previously filed an application for “user identification with voice prints” for social media, which adds user voiceprints as a part of the two-factor authentication process. These patents highlight the growing trend of seamless passwordless authentication, forgoing a typical password for something more difficult to breach, such as biometrics.

But the rise of generative AI has given bad actors a far bigger toolset to breach cybersecurity defenses. Though biometrics are generally harder to breach than a traditional password, deepfakes and voice impersonation technology stand to make breaching them much easier, said Rahul Sood, chief product officer at identity security company Pindrop.

“Any authentication that relies on a human seeing an image/document or listening to a voice is at risk with deepfakes,” Sood said in an email. “Deepfakes break trust in all remote interactions.”

Meta’s tech could overcome this, however, by treating the vibrations created by talking as a second factor. But offering up more in-depth personal biometrics may not be a cure-all to cybersecurity woes, said Theresa Payton, CEO and chief advisor at cybersecurity consultancy Fortalice Solutions.

Biometric data is deeply personal, Payton said, and given how user data is currently handled, handing it over for the sake of security presents its own risks. If that data is exposed in a security breach, it’s not exactly easy to erase or replace, she said.

“Once those are in the wrong hands, how do I prove I’m me and the [bad actor] is not me, if they’re able to present the biometrics?” Payton said.