DeepMind Looks at Ways to Make AI Training Less of a Power Drain

Google’s AI arm wants to save energy when building its models – something needed to keep AI development on track.

DeepMind wants to trim the fat.

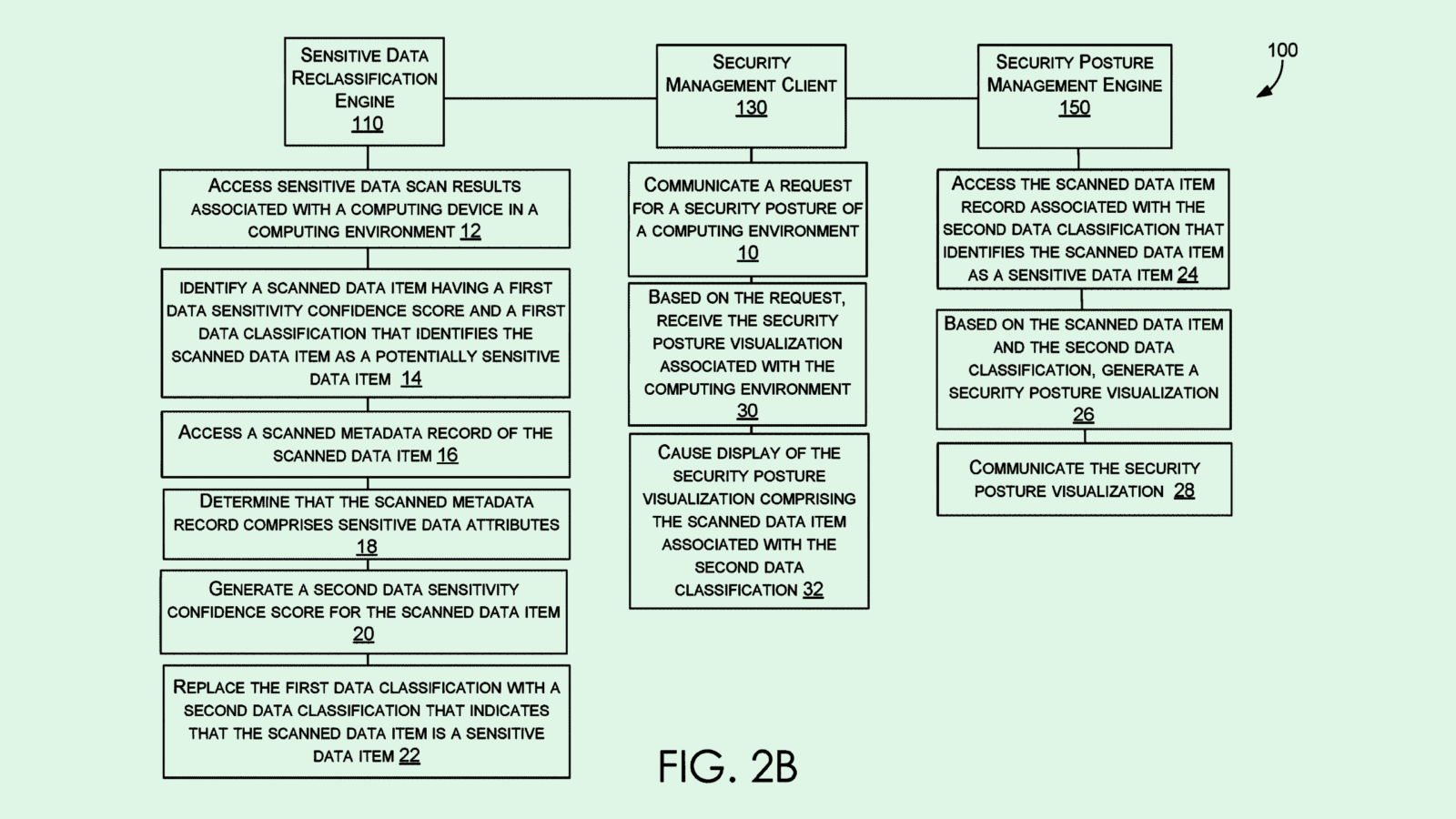

Google’s AI arm filed a patent application in recent months for a system to allocate computing resources “between model size and training data” during the AI training process. Essentially, DeepMind’s tool helps developers budget both power and training resources.

Here’s how it works: First, this system is given a “compute budget,” or the amount of resources a user is willing to blow through on training an AI model to do a certain task. Then the system goes through a process called “allocation mapping,” which divides that budget between model size and the amount of training data. The filing notes that the mapping is built from different trial allocations, each scored by the predicted performance of the model under those conditions.

As a result, this system spits out two key pieces of information: how big your model can be under the given budget and how much training data you need to make it happen. The target model size that this system gives could guide decisions such as how many parameters are used, or the number of layers or neurons to build into a neural network.
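To make the idea concrete, here is a minimal Python sketch of a budget-to-allocation search. It is not the method in the filing: the loss predictor, its constants, and the function names are all hypothetical, and it leans on the common rule of thumb that training a transformer costs roughly 6 × parameters × tokens in floating-point operations. It tries a range of model sizes, spends whatever budget is left on data, and keeps the split with the best predicted performance.

```python
import math

# Hypothetical constants for an illustrative loss predictor.
# These are placeholders for the sketch, not values from the patent filing.
E, A, B, ALPHA, BETA = 1.69, 406.4, 410.7, 0.34, 0.28


def predicted_loss(n_params: float, n_tokens: float) -> float:
    """Assumed predictor: loss falls as the model or the dataset grows."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def tokens_for_budget(compute_budget: float, n_params: float) -> float:
    """Tokens affordable under the rule of thumb FLOPs ~= 6 * params * tokens."""
    return compute_budget / (6.0 * n_params)


def allocate(compute_budget: float, n_trials: int = 200):
    """Score log-spaced trial model sizes; return the best (params, tokens, loss)."""
    lo_exp, hi_exp = 6.0, 12.0  # candidate sizes from 1e6 to 1e12 parameters
    best = None
    for i in range(n_trials):
        n_params = 10 ** (lo_exp + (hi_exp - lo_exp) * i / (n_trials - 1))
        n_tokens = tokens_for_budget(compute_budget, n_params)
        loss = predicted_loss(n_params, n_tokens)
        if best is None or loss < best[2]:
            best = (n_params, n_tokens, loss)
    return best


if __name__ == "__main__":
    params, tokens, loss = allocate(1e21)  # budget in FLOPs, chosen arbitrarily
    print(f"model size ~{params:.3g} params, data ~{tokens:.3g} tokens, "
          f"predicted loss {loss:.3f}")
```

In this toy version, a bigger budget pushes both the suggested model size and the suggested dataset upward, which is the kind of trade-off the filing describes the system resolving automatically.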

DeepMind’s method wraps up by “instantiating” the machine learning model based on the system’s parameters, which just tests how well it works.

The company noted that this system is predicted to “optimize (the) performance of the machine learning model on the machine learning task,” since training takes up only the resources it actually needs.

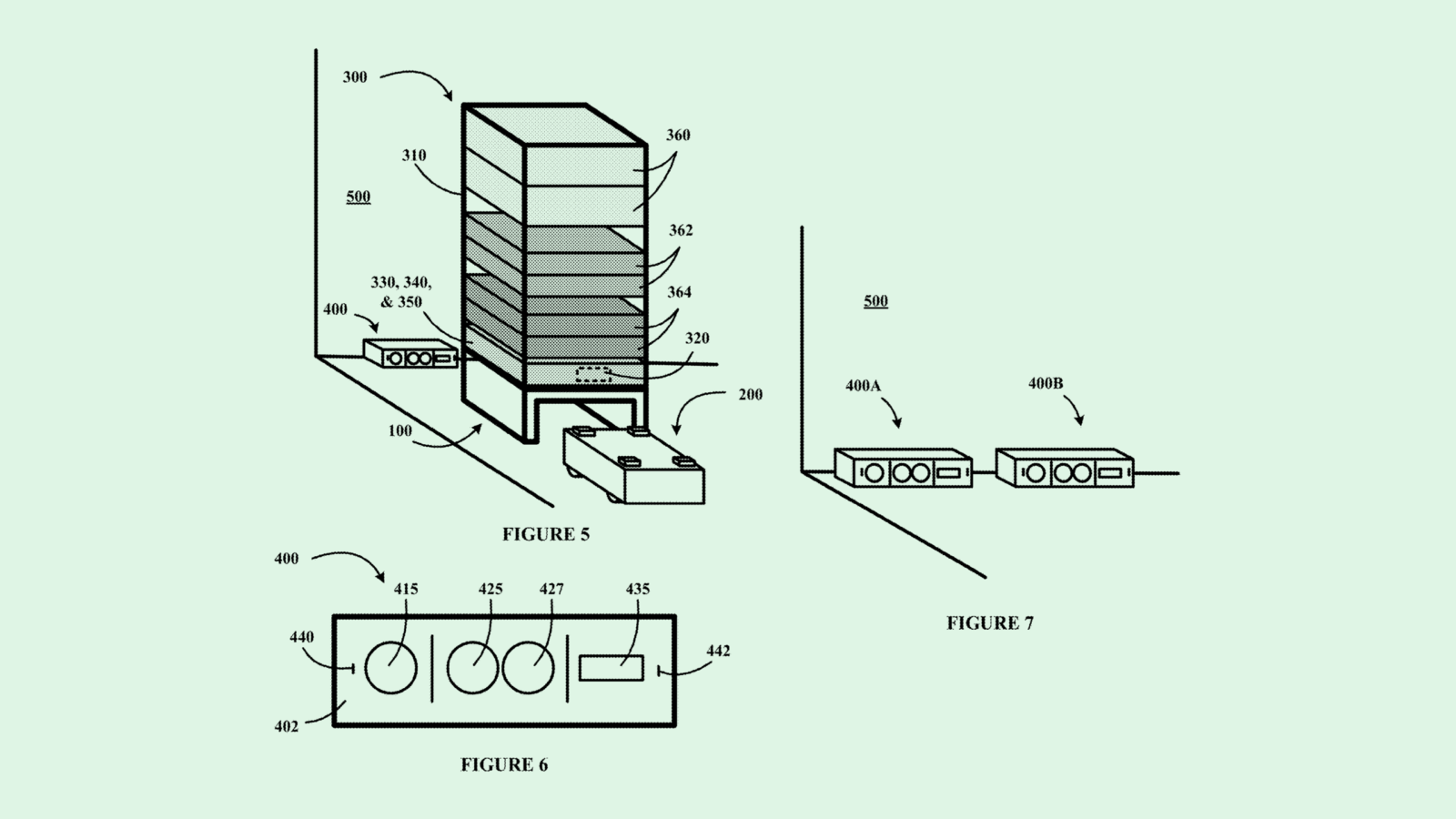

Tech firms like Microsoft and Intel have been looking for an answer to the AI energy-hog problem. DeepMind parent Google has also been searching, seeking to patent a diffusion model with “improved accuracy and reduced consumption of computational resources.”

But according to analysis by Alex de Vries, a PhD candidate at the VU Amsterdam School of Business and Economics, published in energy journal Joule this week, servers used in AI development could burn through between 85 and 134 terawatt-hours a year by 2027, or 0.5% of global annual electricity use and on par with that of several countries. AI data centers are burning through so much energy that utility companies are going back on green energy pledges to meet the need.

Google has spent a lot of energy, both literally and figuratively, to be amongst the top players in AI. The company is constantly filing AI-related patents, and it has integrated AI throughout its Workspace suite, dumped funding into startups like Anthropic and Hugging Face, and partnered with chip giant Nvidia.

But building at that scale comes at a cost. De Vries’ analysis found that “the worst-case scenario suggests Google’s AI alone could consume as much electricity as a country such as Ireland (29.3 TWh per year).”

While this tech wouldn’t slow down the pace of Google’s or DeepMind’s AI development, it would certainly help cut down excess burn, said Vinod Iyengar, head of product at AI training engine ThirdAI. This system could be particularly helpful for large organizations, like Google, that are building and training lots of models at once.

“When you have a lot of people running a lot of workloads, being smart about how you allocate those workloads can actually lead to a good amount of efficiency,” said Iyengar.

But at the end of the day, tools like these just put a Band-Aid on a bullet wound, said Iyengar. The real problem with AI development is the GPUs that are used to do it, which suck up “an order of magnitude” more energy than other hardware, like CPUs, he said.

Iyengar compared it to driving a Humvee versus a normal car: “You can be optimal about driving it, but it’s still a Humvee. That’s part of the challenge with the current ecosystem. It’s heavily dependent on computing-inefficient techniques, which are all GPU-centric. But right now, it’s one size fits all.”