Google’s Patent Could Sniff Out Fake News on Social Media

Google may use machine learning to track down misinformation on social media platforms, according to its latest patent application for a system that finds “information operations campaigns.”


Google wants to use machine learning to separate fact from fiction.

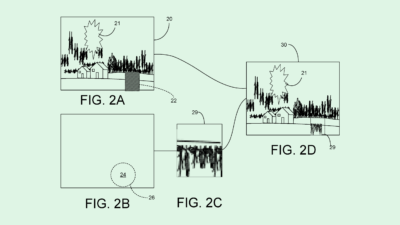

The company filed a patent application for an AI-based system that detects “information operations campaigns on social media.” Google’s system relies on neural network language models to track posts and predict whether the text in them contains misinformation.

“(Information operations) activity has flourished on social media, in part because IO campaigns can be conducted inexpensively, are relatively low risk, have immediate global reach, and can exploit the type of viral amplification incentivized by social media platforms,” Google said.

Google’s system uses transfer learning: a general language model is further trained to make predictions, with social media posts as its training dataset. Each post is labeled as either information operations or benign, and may also be labeled with information about the poster, such as “individual, organization, (or) nation state.” Google also noted that a separate prediction model may be trained for each platform, naming Facebook, LinkedIn and Twitter (now X) in the filing.
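To make the training setup more concrete, here is a minimal sketch of how labeled posts might be represented and split by platform before fine-tuning. The record fields, the `LabeledPost` and `split_by_platform` names, and the sample posts are all hypothetical. The filing names the labels, the poster categories and the platforms, but not a data schema, and the fine-tuning step itself is left out here.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Label(Enum):
    # The two training classes described in the filing.
    INFORMATION_OPERATIONS = "information_operations"
    BENIGN = "benign"


class PosterType(Enum):
    # Optional poster metadata mentioned in the filing.
    INDIVIDUAL = "individual"
    ORGANIZATION = "organization"
    NATION_STATE = "nation_state"


@dataclass(frozen=True)
class LabeledPost:
    # Hypothetical record layout; the filing does not specify a schema.
    text: str
    platform: str
    label: Label
    poster_type: Optional[PosterType] = None


def split_by_platform(posts: list[LabeledPost]) -> dict[str, list[LabeledPost]]:
    """Group labeled posts so a separate model could be fine-tuned per platform."""
    datasets: dict[str, list[LabeledPost]] = defaultdict(list)
    for post in posts:
        datasets[post.platform].append(post)
    return dict(datasets)


# Made-up example posts, for illustration only.
posts = [
    LabeledPost("Breaking: polls closed early!", "twitter",
                Label.INFORMATION_OPERATIONS, PosterType.NATION_STATE),
    LabeledPost("Excited to join the team.", "linkedin",
                Label.BENIGN, PosterType.INDIVIDUAL),
    LabeledPost("Share before it's deleted!", "twitter",
                Label.INFORMATION_OPERATIONS),
]
by_platform = split_by_platform(posts)
print({platform: len(items) for platform, items in by_platform.items()})
```

Keeping a per-platform split mirrors the filing’s note that each platform may get its own prediction model, since posting styles differ across Facebook, LinkedIn and X.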

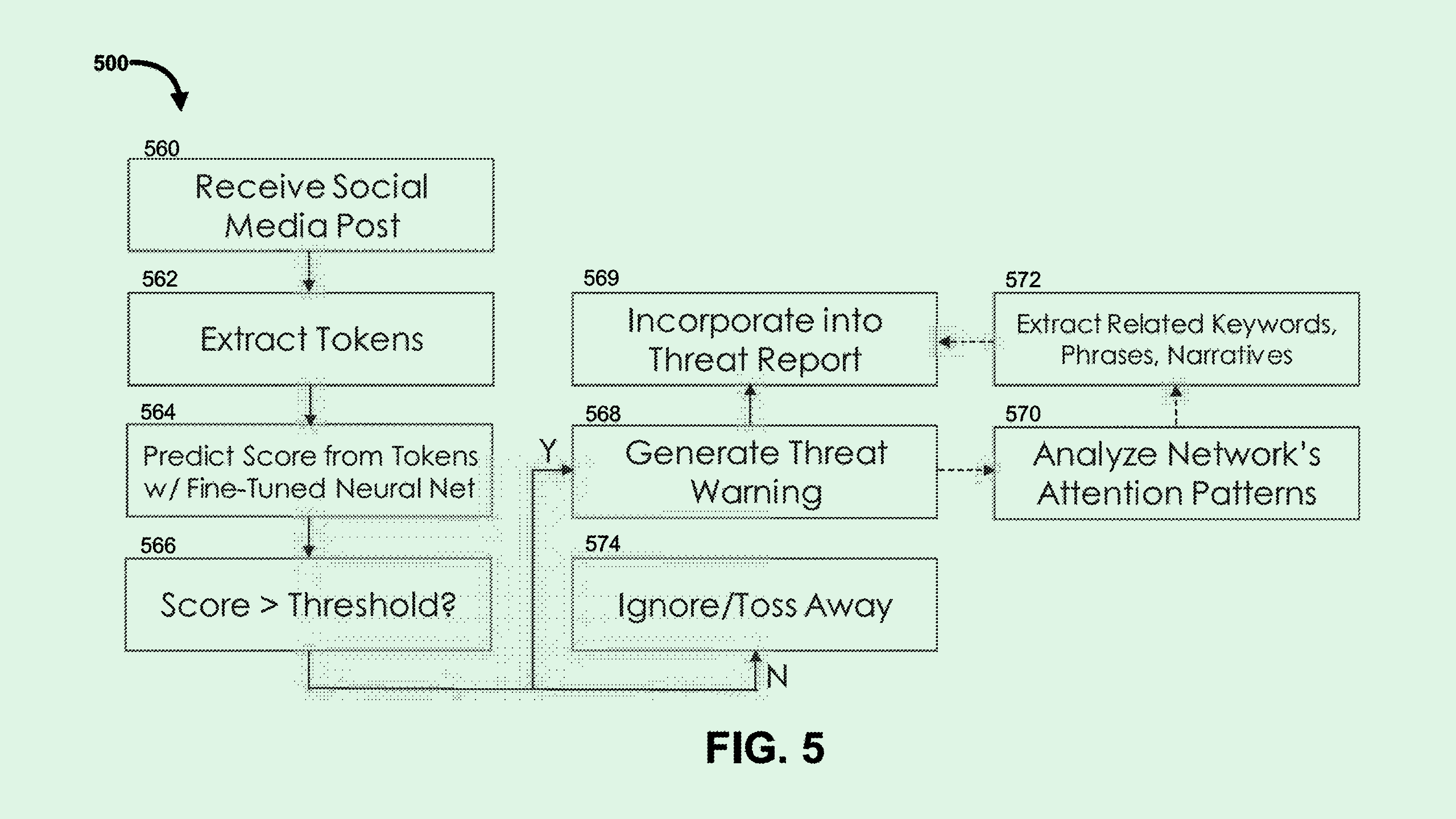

When put to use, the system extracts features from social media posts and feeds them to the prediction model. The model generates a prediction score, and if that score exceeds a set threshold, the system outputs a “threat warning” indicating that the post is part of a disinformation campaign.
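The score-and-threshold step can be sketched as follows, with loud caveats. The patent describes a neural language model; this toy substitutes a hypothetical logistic score over three hand-picked features. The feature names, weights, `extract_features`, `check_post` and the `THREAT_THRESHOLD = 0.8` value are all assumptions for illustration, and the filing does not disclose a threshold.

```python
import math

# Hypothetical stand-in for the trained prediction model: a logistic score
# over hand-picked features. The patent uses a neural language model instead.
FEATURE_WEIGHTS = {
    "urgency_words": 1.2,
    "link_count": 0.4,
    "account_age_days": -0.01,
}
BIAS = -1.0
THREAT_THRESHOLD = 0.8  # assumed value; the filing does not give one


def extract_features(text: str, account_age_days: int) -> dict[str, float]:
    """Toy feature extractor (hypothetical features, not from the patent)."""
    lowered = text.lower()
    urgency = sum(lowered.count(p) for p in ("breaking", "share now", "before it's deleted"))
    return {
        "urgency_words": float(urgency),
        "link_count": float(lowered.count("http")),
        "account_age_days": float(account_age_days),
    }


def prediction_score(features: dict[str, float]) -> float:
    """Map weighted features to a score between 0 and 1 with the logistic function."""
    z = BIAS + sum(FEATURE_WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def check_post(text: str, account_age_days: int,
               threshold: float = THREAT_THRESHOLD) -> tuple[float, bool]:
    """Return the score and whether it exceeds the threshold (a 'threat warning')."""
    score = prediction_score(extract_features(text, account_age_days))
    return score, score > threshold


score, threat_warning = check_post(
    "BREAKING: share now before it's deleted http://a http://b", account_age_days=2
)
print(f"score={score:.2f} threat_warning={threat_warning}")
```

Where the threshold sits is a trade-off: raising it flags fewer posts and produces fewer false alarms, but risks missing real campaigns.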

The system also generates a “threat report” via a dashboard with information on the posts that surpass the threshold. Google noted that these would be presented to an analyst, such as a cybersecurity or information operations analyst.
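At its simplest, a threat report of this kind could list the flagged posts ordered by score. The sketch below assumes a hypothetical `ScoredPost` record and plain-text output. The filing describes a dashboard for analysts, and a real one would carry far more context than this.

```python
from dataclasses import dataclass


@dataclass
class ScoredPost:
    # Hypothetical record: a post ID, its platform and the model's score.
    post_id: str
    platform: str
    score: float


def build_threat_report(scored: list[ScoredPost], threshold: float) -> list[str]:
    """List posts whose score exceeds the threshold, highest score first."""
    flagged = sorted(
        (p for p in scored if p.score > threshold),
        key=lambda p: p.score,
        reverse=True,
    )
    return [f"{p.post_id} [{p.platform}] score={p.score:.2f}" for p in flagged]


scored = [
    ScoredPost("post-1", "twitter", 0.91),
    ScoredPost("post-2", "facebook", 0.42),
    ScoredPost("post-3", "linkedin", 0.97),
]
for line in build_threat_report(scored, threshold=0.8):
    print(line)
```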

One of the main purposes of machine learning is to process and sort large quantities of information. That makes it the perfect tool to fight misinformation, said Brian P. Green, director of technology ethics at the Markkula Center for Applied Ethics at Santa Clara University.

This isn’t the first patent aiming to keep track of misinformation: A recent Adobe filing laid out plans for “fact correction” using a machine learning model, and Google sought to patent a system to “protect against exposure” to content that violates a content policy.

“There’s a huge amount of information to keep an eye on, and there’s lots of different sources of it around the internet,” said Green. “(Misinformation) might not be necessarily detectable by just a few humans paying attention. Something that’s a lot more effective is AI watching lots and lots of things … and reacting to it at a higher speed than a human would probably be able.”

But AI that tracks down misinformation has to actively fight against algorithms that promote it, said Green. Social media platforms are designed to promote whatever gets engagement, he noted. A recent study from researchers at the University of Southern California found that the structure of online sharing built into social media platforms has a bigger impact on the spread of misinformation than users’ individual political affiliations or lack of critical thinking skills.

Often, he said, the things that spread the fastest are the most salacious. “If the algorithm is optimizing for engagement, or for spreading stories that are really exciting, then that’s not optimized for truth.”

The tech in Google’s patent could be applied to Alphabet-owned YouTube, but as the patent notes, it could also be used to monitor other social media platforms. Because Google’s priority is delivering high-quality search results, Green noted, monitoring other platforms for misinformation could support that effort.

Especially with the 2024 presidential election close at hand, consumer-facing tech firms have a responsibility to limit the spread of misinformation, Green said. “It’s a question of whether social media companies and other companies involved in AI and the flow of information are going to step up and actually protect us from, you know, being completely ruined by it,” he said.