Google’s AI Spam Detector

Google wants to track down spam in whatever form it takes.

Google is searching for new ways to fight spammy query results.

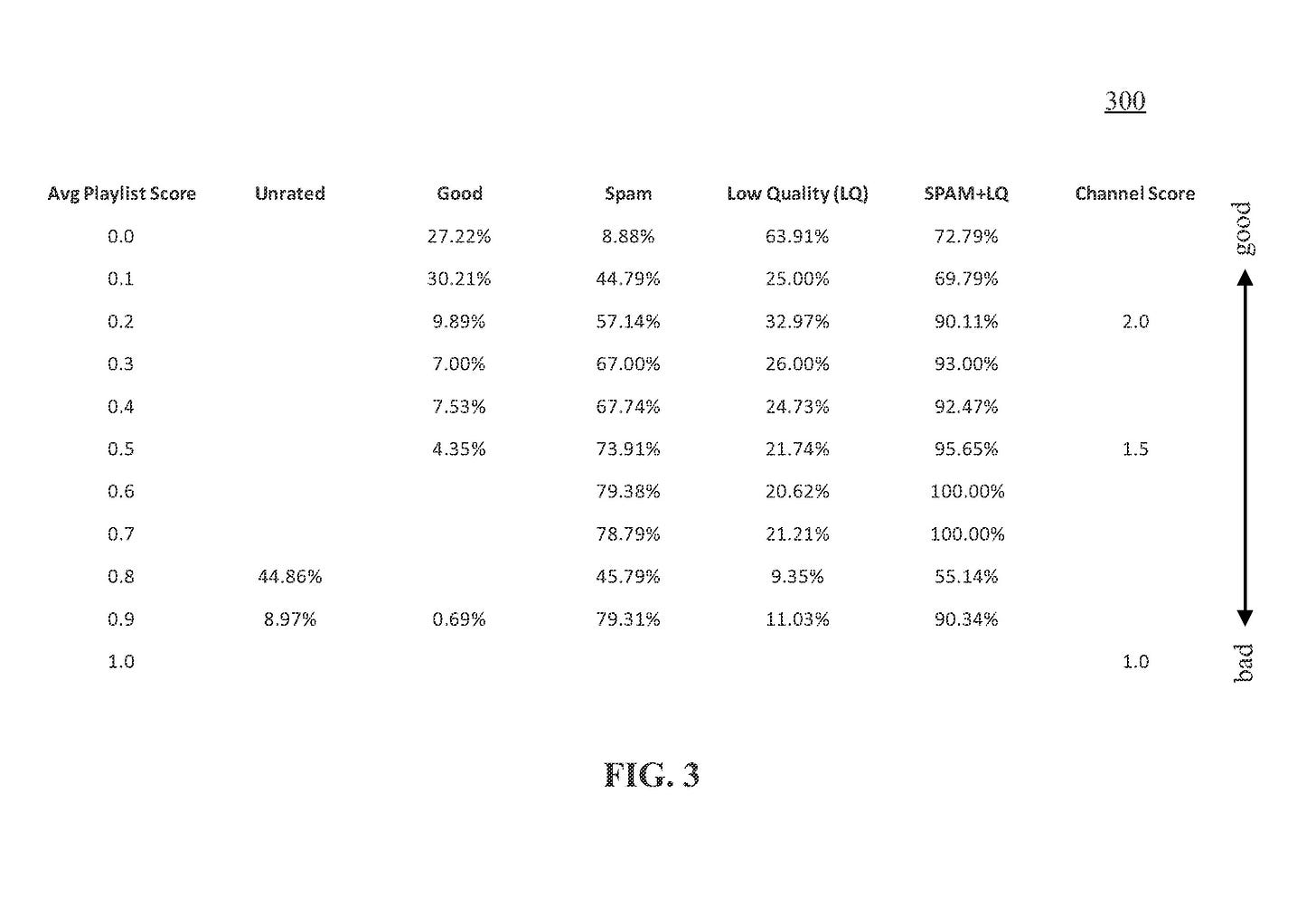

The company is seeking to patent a method for identifying abusive user accounts “based on playlists.” This system, which would likely be built into YouTube, calculates a “playlist score” based on features associated with a user’s playlist. A playlist’s features include its content, view count, “quality score,” and the activity level of the user who created it. The system also calculates a “channel score” by averaging the user’s playlist scores.

If these calculations indicate that a user is abusive, that user’s playlists are “demoted,” meaning their rank is lowered in search results and their reach is limited.
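To make the scoring flow concrete, here is a minimal Python sketch of the idea as described above. It is not Google’s implementation: the feature names, weights, threshold, and demotion factor are all hypothetical, since the filing as summarized here gives no specific values. The only parts taken from the description are that a playlist score is built from playlist features, that the channel score is the average of those scores, and that abusive users’ playlists are ranked lower.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Playlist:
    spam_keyword_hits: int            # hypothetical stand-in for the "content" feature
    view_count: int
    quality_score: float              # assumed range: 0.0 (worst) to 1.0 (best)
    creator_playlists_per_day: float  # hypothetical stand-in for creator activity level


def playlist_score(p: Playlist) -> float:
    """Return an illustrative abuse score in [0, 1]; higher means more spam-like."""
    keyword_signal = min(p.spam_keyword_hits / 5, 1.0)
    low_quality = 1.0 - p.quality_score
    low_views = 1.0 if p.view_count < 100 else 0.0
    burst_activity = min(p.creator_playlists_per_day / 50, 1.0)
    # Made-up weights that sum to 1.0; the filing does not specify any.
    return (0.4 * keyword_signal + 0.3 * low_quality
            + 0.1 * low_views + 0.2 * burst_activity)


def channel_score(playlists: list[Playlist]) -> float:
    """Average one user's playlist scores, as the article describes."""
    return mean([playlist_score(p) for p in playlists])


ABUSE_THRESHOLD = 0.6   # assumed cutoff for treating a channel as abusive
DEMOTION_FACTOR = 0.1   # assumed penalty applied to search relevance


def search_rank(relevance: float, owner_channel_score: float) -> float:
    """Demote a playlist in search when its owner's channel looks abusive."""
    if owner_channel_score >= ABUSE_THRESHOLD:
        return relevance * DEMOTION_FACTOR
    return relevance
```

Under these assumptions, a channel full of keyword-stuffed, low-quality playlists ends up with a high channel score, and every playlist it owns is pushed down in search regardless of how relevant any single one appears.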

Google’s method relies on a “playlist classifier” and a “channel classifier,” AI models trained to make these calculations using examples of “bad” and “good” playlists, such as those created by suspended channels versus those with a high “quality score.” The system is also continuously retrained so that playlist scores reflect “recent trends in keywords and abuse patterns.”
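As a rough illustration of what training such a classifier on labeled examples could look like, the sketch below fits a tiny logistic regression in plain Python. The choice of logistic regression, the two features, and the sample data are all assumptions made for illustration; the filing as summarized here does not say which model type or features Google uses.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_classifier(examples, labels, epochs=500, lr=0.5):
    """Fit logistic regression with stochastic gradient descent.

    examples: feature vectors, e.g. [keyword_signal, low_quality] (hypothetical features)
    labels:   1 for "bad" playlists (e.g. from suspended channels), 0 for "good" ones
    """
    weights = [0.0] * len(examples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(examples, labels):
            pred = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = pred - y
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def predict(model, x) -> float:
    """Return the estimated probability that a playlist is spam."""
    weights, bias = model
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Made-up training data: three "bad" and three "good" playlists.
bad = [[0.9, 0.8], [1.0, 0.9], [0.8, 0.7]]
good = [[0.1, 0.1], [0.0, 0.2], [0.2, 0.0]]
model = train_classifier(bad + good, [1, 1, 1, 0, 0, 0])
```

In this sketch, keeping up with “recent trends” would simply mean calling `train_classifier` again on a fresher set of labeled playlists and rescoring with the new model.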

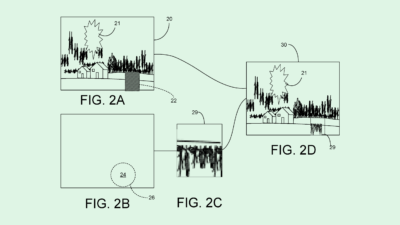

This tech would allow Google to filter results and control the reach of “spam playlists” on platforms like YouTube. Such playlists can include content that is “unrelated, misleading, repetitive, racy, pornographic, infringing, and/or ‘clickbait,’” but often use popular keywords in their descriptions to gain popularity and rank higher in search results.

“Existing approaches for controlling spam playlists are ineffective due to the sheer number of spam playlists,” Google said in its filing. “Manual user reporting of individual spam playlists simply cannot address the thousands of new spam playlists that are automatically generated by abusive users on their respective user channels each day.”

This patent is the latest of several that show Google’s interest in slowing the spread of low-quality content. The company has filed a patent application for a machine learning-based system that essentially predicts how viral a piece of content may become, aiming to create a safety net to catch dangerous content before it goes live. The company has also sought to patent tech that uses machine learning to track and predict user behavior in order to steer users away from misinformation or potentially dangerous content.

Taken together, these patent filings could help the company keep the wrong content from going viral on YouTube and beyond. And using its strengths in AI to combat harmful content only makes sense, given that the tech can identify disparate anomalies across the platform more quickly than a team of content moderators could on their own.

But at the end of the day, all roads lead back to Google’s highly lucrative ad business. Both Google and YouTube saw slight dips in their ad revenue in the latest quarter amid a slowdown in the sector. Bad content surfacing at the top of search results likely won’t make the company’s advertising partners happy, potentially leading them to favor rivals like Meta or TikTok. Curtailing the reach of that content is likely a high priority.

Google has faced a number of claims over the years that it hasn’t been so diligent about catching bad content. Both YouTube and the search engine have faced allegations that the platforms have amplified fake news, hate speech, and disinformation. The company also announced last week that it reversed its election integrity policy, which took down content claiming fraud in the 2020 election. YouTube claimed that the policy had the “unintended effect of curtailing political speech without meaningfully reducing the risk of violence or other real-world harm.”

YouTube said that it will “remain vigilant as the election unfolds,” and that it will have more details about its approach to the 2024 election in the coming months. AI-based systems like those in Google’s recent patents may be part of its plan behind the scenes.

Have any comments, tips or suggestions? Drop us a line! Email at admin@patentdrop.xyz or shoot us a DM on Twitter @patentdrop.