Tech Firms Read the Look on Your Face

Why Sony and Meta both want to track your facial movements in VR, without using a camera.


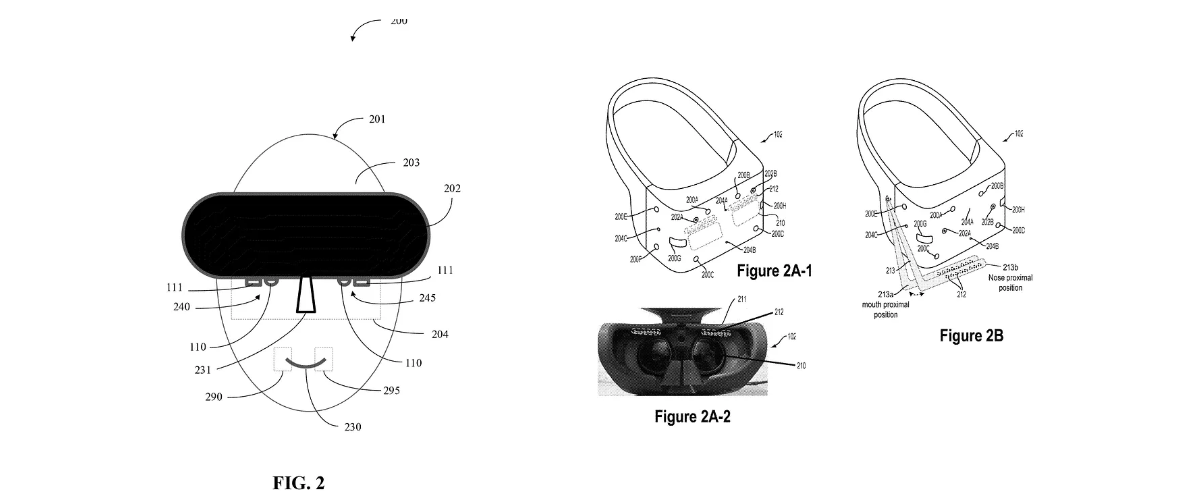

As AR and VR slowly but surely gain traction in gaming, tech firms want to know where your head’s at: Sony and Meta have both filed patent applications for tech that tracks users’ facial expressions while they’re using an artificial reality device.

Let’s start with Meta. The company is seeking to patent a method for facial expression tracking that, in some examples, doesn’t require “cameras or complex image data processing.” Instead, Meta’s tech uses an illuminator and a photon detector.

As the name suggests, the headset’s illuminator lights up a user’s face, and the photon detector then picks up how that light reflects off it. The data from these two components is then sent to a processor, which determines the look on the user’s face using an algorithm that can “reconstruct facial expressions.”
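The filing, as described here, doesn’t spell out its reconstruction algorithm. As a rough illustration only, the Python sketch below assumes the headset has a handful of photon detectors, each reporting a reflected-light intensity, and matches the current readings against hypothetical per-user calibration profiles with a simple nearest-centroid lookup. The detector count, the `CALIBRATION` values and the `classify_expression` helper are all made up for this example and are not Meta’s method.

```python
import math

# Hypothetical calibration data: average reflected-light intensity per detector,
# recorded while the user holds each expression. Invented values, not from the filing.
CALIBRATION = {
    "neutral": [0.52, 0.48, 0.50, 0.47],
    "smile":   [0.61, 0.58, 0.44, 0.40],
    "frown":   [0.45, 0.42, 0.57, 0.55],
}

def distance(a, b):
    """Euclidean distance between two equal-length lists of detector readings."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def classify_expression(readings, calibration=CALIBRATION):
    """Return the calibrated expression whose reflected-light profile is closest
    to the current readings (a nearest-centroid stand-in for the unspecified algorithm)."""
    if not readings:
        raise ValueError("need at least one detector reading")
    return min(calibration, key=lambda label: distance(readings, calibration[label]))

print(classify_expression([0.60, 0.57, 0.45, 0.41]))  # smile
```

The point of the toy is the one Meta makes about cost: a few intensity numbers and a cheap comparison stand in for full image frames and heavy image processing.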

Along with obviating the need for an inward-facing camera system in an AR or VR headset, Meta said its tech “provides a low cost, low computational overhead facial recognition system.”

Sony, meanwhile, is working on tracking users’ facial movements in artificial reality games using barometric pressure sensors. Mounted in the headset, these sensors capture “pressure variances” caused by the motion of a user’s facial features, including eye movements, breathing patterns, blinking or speaking. A processor then analyzes these variances to detect and evaluate “motion metrics,” or a user’s facial expressions, which are used to “fine tune the user interactions.”
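Sony’s filing doesn’t publish the analysis it runs on those readings. As an illustrative sketch only, the Python below assumes a stream of pressure samples (in hypothetical units relative to a baseline), splits it into fixed windows, and flags a window as facial motion when its variance exceeds a threshold. The `motion_metrics` function, window size and threshold are invented for this example, not taken from the patent.

```python
from statistics import pvariance

def motion_metrics(samples, window=4, threshold=0.5):
    """Split pressure readings into fixed, non-overlapping windows and report each
    window's variance plus whether it crosses a (hypothetical) motion threshold."""
    metrics = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        var = pvariance(chunk)  # population variance of this window
        metrics.append({"start": start, "variance": var, "motion": var > threshold})
    return metrics

# Made-up readings: quiet, then a burst (e.g. a blink or speech), then quiet again.
samples = [0.0, 0.1, -0.1, 0.0, 1.5, -1.2, 1.8, -1.0, 0.1, 0.0, -0.1, 0.1]
print([m["motion"] for m in motion_metrics(samples)])  # [False, True, False]
```

A real system would presumably go further and map such windows to specific expressions or engagement signals; this only shows how raw pressure variances could be turned into simple per-window metrics.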

Sony said this kind of tracking helps monitor user engagement with AR and VR games, to “gauge user interest or lack thereof” in the content being shown. Don’t let them catch you yawning.

“Gauging interest level of the users to the content of the different applications will assist the developers and content providers to provide content that is useful and/or interesting to the user,” Sony said in its filing.

As we’ve seen in several editions of Patent Drop, tech companies seem very interested in building tools that can track users’ faces, whether to make video calls less awkward with simulated eye contact or to make rendering easier for VR headsets.

What Meta’s and Sony’s patent filings have in common, however, is that they use sensors rather than cameras to track facial movement, potentially preserving privacy in a way that camera-based facial tracking methods don’t, Jake Maymar, VP of innovation at The Glimpse Group, told me. Ditching cameras also makes financial sense for the companies: given the surfeit of existing patents on camera-based expression tracking, sensors offer a workaround that lets them secure unique facial tracking tech of their own, he said.

“It makes perfect sense. They’re just trying to figure out the workaround of ‘How do I get facial capture without using cameras?’” Maymar said. “Because cameras have been patented and explored; they’re expensive and also processor intensive. There’s also privacy and security issues with that.”

Sony and Meta are hardly newbies in the extended reality gaming space. Sony has long been an industry leader in console gaming with the PlayStation, and has its own headset offering in PS VR. Meta, of course, has its Quest line of VR and mixed-reality headsets, which support its lofty metaverse goals and run games including Among Us and Beat Saber.

As for facial tracking itself, there are a few reasons Sony and Meta may be interested. In their filings, both companies touch on the unique engagement metrics that can be gleaned from tracking a user’s expressions in-game, such as their emotional responses to certain content. But another potential use, Maymar said, is using these expressions to enhance the VR experience.

Maymar gave the example of going on an in-game VR quest with friends: “Let’s say you’re in this scary corridor, and you can see your friends’ faces and they can see your face. You can see those expressions — that excitement — not only through gestures, but their actual face. It feels like a bonding moment, because it feels like you’re there.”

At this point, extended reality experiences represent a small fraction of the gaming sector, Maymar said. Part of the reason is that XR can be a solitary experience, devoid of connection or emotion. But adding a way to convey emotion during gameplay, such as through facial expressions, could be key to growing user interest.

But until that happens, Maymar said, “XR is probably not going to really have a big impact on gaming.”

Have any comments, tips or suggestions? Drop us a line! Email us at admin@patentdrop.xyz or shoot us a DM on Twitter @patentdrop.