Google Wants to Create a Mixed Reality (plus more from Microsoft & Square)

Google’s AR ad play, Microsoft’s meeting sentiment analysis & more


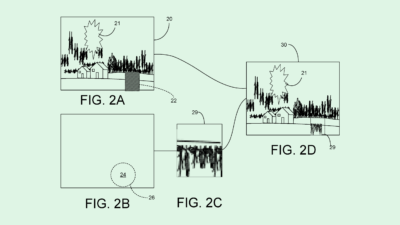

1. Google – Autosuggestions in Augmented Reality Environments

Google is looking at understanding a person’s physical environment and placing relevant virtual objects in a mixed reality view.

To do this, when a user opens up an AR experience on their smartphone or glasses, Google will look for empty spaces in the physical environment. By looking at nearby objects, Google want to deduce the context of the physical space, so that they can identify relevant virtual objects to fit the environment.

For example, take the above image. In the physical environment, there is empty space on the desk. By seeing that there is a laptop on the desk already, Google could identify objects that would fit the context of the empty space and place those objects in the AR view – e.g. headphones and a notebook.
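The desk example can be sketched in a few lines of Python. This is only an illustration of the idea, not the method in the filing: the `RELATED_OBJECTS` table, the function name `suggest_virtual_objects` and the simple vote counting are all assumptions made up for this sketch.

```python
from collections import Counter

# Hypothetical lookup: a detected physical object -> virtual objects that
# plausibly belong next to it. The entries are illustrative only.
RELATED_OBJECTS = {
    "laptop": ["headphones", "notebook", "mouse"],
    "monitor": ["keyboard", "mouse"],
    "sofa": ["cushion", "throw blanket"],
}


def suggest_virtual_objects(detected_objects, max_suggestions=2):
    """Rank candidate virtual objects by how many nearby objects imply them."""
    votes = Counter()
    for obj in detected_objects:
        for candidate in RELATED_OBJECTS.get(obj, []):
            votes[candidate] += 1

    # Do not suggest something that is already physically present.
    for obj in detected_objects:
        votes.pop(obj, None)

    # Highest vote count first; ties keep the order in which they were seen.
    ranked = sorted(votes, key=lambda name: -votes[name])
    return ranked[:max_suggestions]


print(suggest_virtual_objects(["laptop"]))
```

A real system would presumably weigh far more signals, such as the size of the empty space or the user's brand interests mentioned in the filing. The sketch only shows the step of going from "what is nearby" to "what might fit here".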

What’s most interesting about this application is that Google are actively thinking about how their advertising revenue model could extend into an AR world. The patent filing mentions that the virtual object suggestions could be based on a user’s brand interest, and that interacting with a virtual object could enable further interactions such as the ability to purchase an item.

To see another example of a big tech company imagining AR commerce, check out this previous issue of Patent Drop for a description of a PayPal patent.

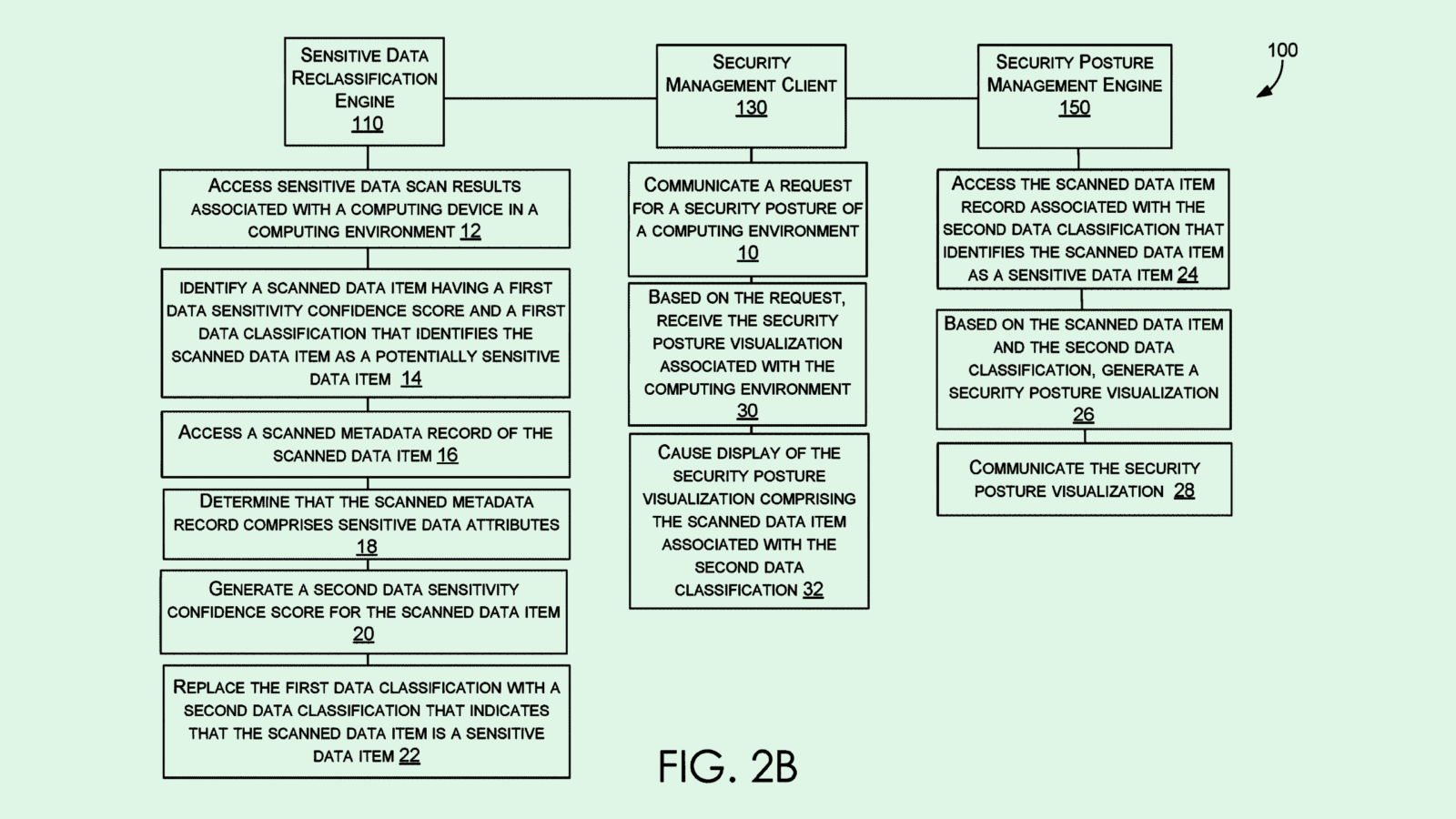

2. Microsoft – Generating a meeting synopsis w/ sentiment analysis

Microsoft are working on generating smart meeting summaries that take into account the sentiment of participants, the sentiment of the topics being discussed, and time stamps for the most relevant parts of the conversation.

To do this, Microsoft would be analysing both the audio and the video feeds of the participants.

For example, to highlight any controversial or contentious topic that might need revisiting, Microsoft’s system could take into account what’s being said, who said what, strength of voice, number of interruptions, raised hands and facial expressions. The filing even describes listening out for non-linguistic expressions, such as yawns, sighs and laughter.

Another use case would be determining the level of consensus (i.e. positive and negative reactions) within a meeting, and what topics drove each type of reaction. For example, if someone makes a statement and then others react negatively (either verbally or non-verbally), Microsoft’s system would take account of this, note the topic, and feed it into a consensus score for the meeting.
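To make the consensus idea concrete, here is a minimal Python sketch. The filing does not spell out a formula in the passage above, so everything here is an assumption: the `Reaction` record, the sentiment scale from -1.0 to 1.0, and the use of a plain average as the "consensus score".

```python
from dataclasses import dataclass


@dataclass
class Reaction:
    """One observed reaction (verbal or non-verbal) to a topic."""
    topic: str
    sentiment: float  # hypothetical scale: -1.0 (negative) to 1.0 (positive)


def consensus_by_topic(reactions):
    """Average sentiment per topic, to show which topics drove which reaction."""
    totals, counts = {}, {}
    for r in reactions:
        totals[r.topic] = totals.get(r.topic, 0.0) + r.sentiment
        counts[r.topic] = counts.get(r.topic, 0) + 1
    return {topic: totals[topic] / counts[topic] for topic in totals}


def meeting_consensus(reactions):
    """Average sentiment across the whole meeting (0.0 if nothing was observed)."""
    if not reactions:
        return 0.0
    return sum(r.sentiment for r in reactions) / len(reactions)


reactions = [
    Reaction("pricing", -1.0),   # e.g. an interruption
    Reaction("pricing", -0.5),   # e.g. a sigh
    Reaction("roadmap", 1.0),    # e.g. laughter and nodding
    Reaction("roadmap", 0.5),
]
print(consensus_by_topic(reactions))
print(meeting_consensus(reactions))
```

In this toy meeting, "pricing" scores negatively and "roadmap" positively, which is the kind of per-topic breakdown an organisational dashboard could surface.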

Microsoft then describe the possibility of building organisational dashboards to go back and see which topics drove positive meetings, and which drove negative meetings. One can imagine this being extremely useful in sales meetings, where sales teams can review which topics, or which aspects of a product demo, caused confusion or delight among potential buyers.

This filing seems to be an elaboration of a Microsoft filing I wrote about in #003 PATENT DROP, where they were looking to summarise the topics being discussed on video meetings.

In this filing, Microsoft are now looking to go deeper into tracking the verbal and non-verbal signals of sentiment to get richer insights from meetings. With Microsoft’s AI prowess, Teams is becoming an increasingly differentiated alternative to Zoom.

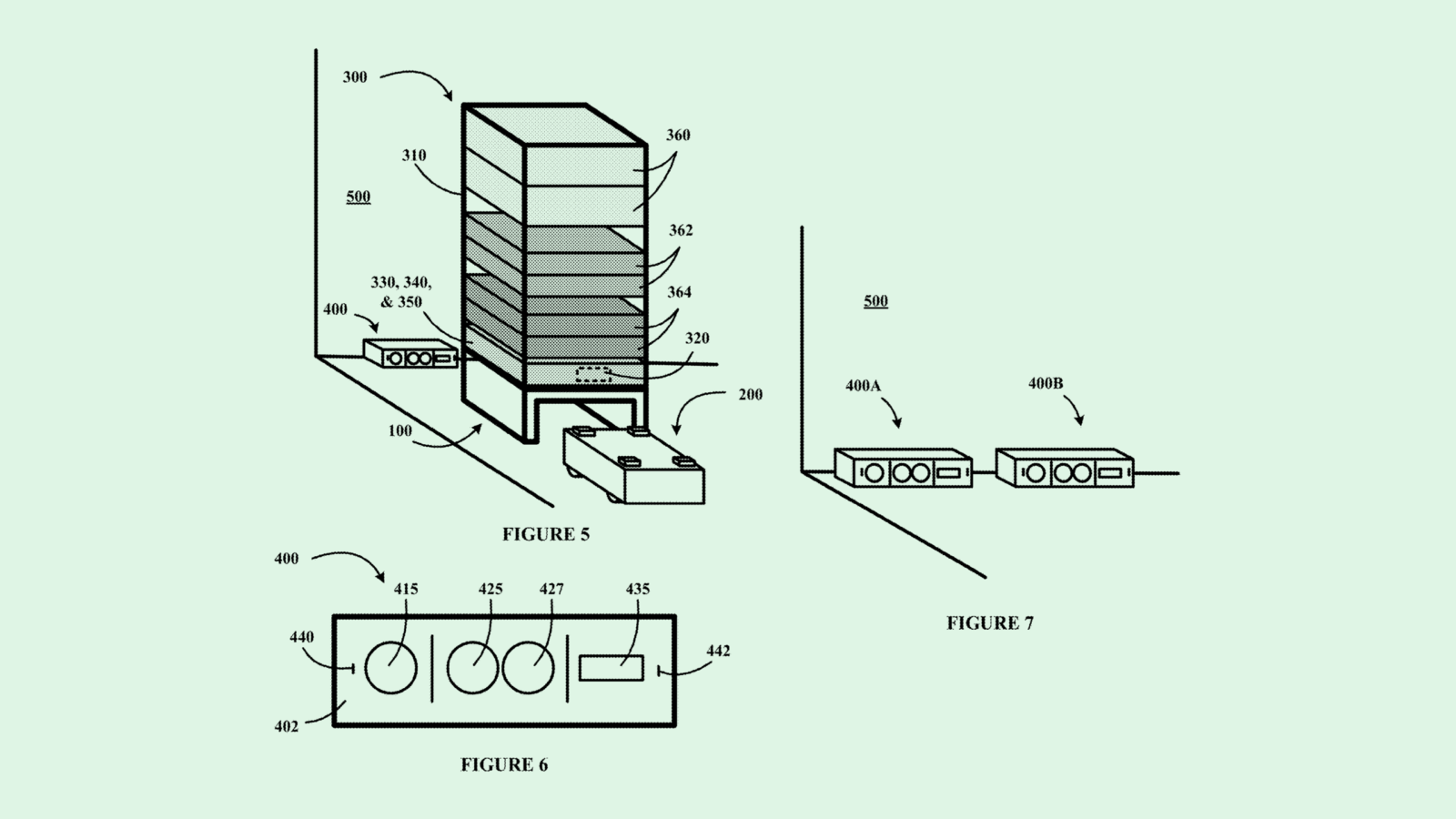

3. Square – Location based transaction completion

Last week, Square updated a patent filing that they hadn’t touched in 6 years.

Square are working on making the ordering and bill-payment process faster and more efficient at restaurants.

When someone has finished eating their food, Square describe the customer being able to open up their phone, request a bill through an app, and then automatically receive the correct bill from the Square merchant device by using the customer’s location information.

Each table would have a wireless beacon that transmits location information to the buyer’s phone. When the buyer requests their bill, their phone will automatically pick up their table number and then submit this information to the merchant device.
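The beacon-to-bill lookup could look roughly like the Python sketch below. It is a simplification built on assumptions: the beacon IDs, the `BEACON_TO_TABLE` and `OPEN_BILLS` data, and the rule of picking the beacon with the strongest signal are all invented for illustration and are not taken from the filing.

```python
# Hypothetical data held by the merchant device.
BEACON_TO_TABLE = {"beacon-a1": 4, "beacon-b7": 9}
OPEN_BILLS = {
    4: [("Pasta", 1250), ("Espresso", 300)],  # prices in cents
    9: [("Salad", 900)],
}


def nearest_table(beacon_signals):
    """Return the table whose beacon is heard most strongly by the phone.

    beacon_signals maps beacon ID -> signal strength in dBm
    (values closer to 0 mean a stronger signal). Assumed heuristic.
    """
    known = {b: s for b, s in beacon_signals.items() if b in BEACON_TO_TABLE}
    if not known:
        return None
    strongest = max(known, key=known.get)
    return BEACON_TO_TABLE[strongest]


def request_bill(beacon_signals):
    """Resolve the customer's table and return its open bill, if any."""
    table = nearest_table(beacon_signals)
    if table is None or table not in OPEN_BILLS:
        return None
    items = OPEN_BILLS[table]
    total = sum(price for _, price in items)
    return {"table": table, "items": items, "total_cents": total}


# The phone hears two beacons; the one at table 4 is stronger.
print(request_bill({"beacon-a1": -48, "beacon-b7": -71}))
```

The point of the sketch is that the customer never types in a table number: the phone's surroundings identify the table, and the merchant device matches it to the right bill.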

With the bill requested, the customer can then make the payment directly through their phone.

Using the same system, Square also describe customers being able to order items directly from their phone without needing to wait for a member of staff to come and serve them.

When the filing was first written in 2014, Square may have been purely thinking about enabling a more seamless payment experience for customers and restaurants. So much time and effort is spent trying to get the attention of a waiter, who then takes time bringing the bill, and then eventually comes to actually take a payment.

I wonder if the filing has come back to life as Square think about how restaurants could operate in a post-Covid world. If restaurants were to implement this system of ordering and receiving bill payments, there’d be fewer interactions required between members of the public and restaurant staff, reducing the risk of transmission to both parties. Moreover, such a system may enable restaurants to serve their customers with fewer staff, thereby helping control costs.