Google’s Tech Could Let AI Skip Training

Google may have found a shortcut to develop chatbots. The tech could prove lucrative in helping enterprises integrate their own AI.

Google wants to make AI into a jack of all trades.

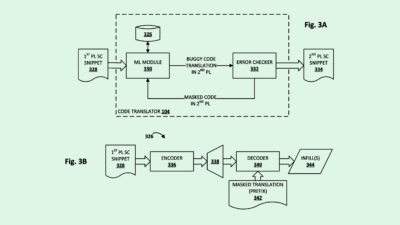

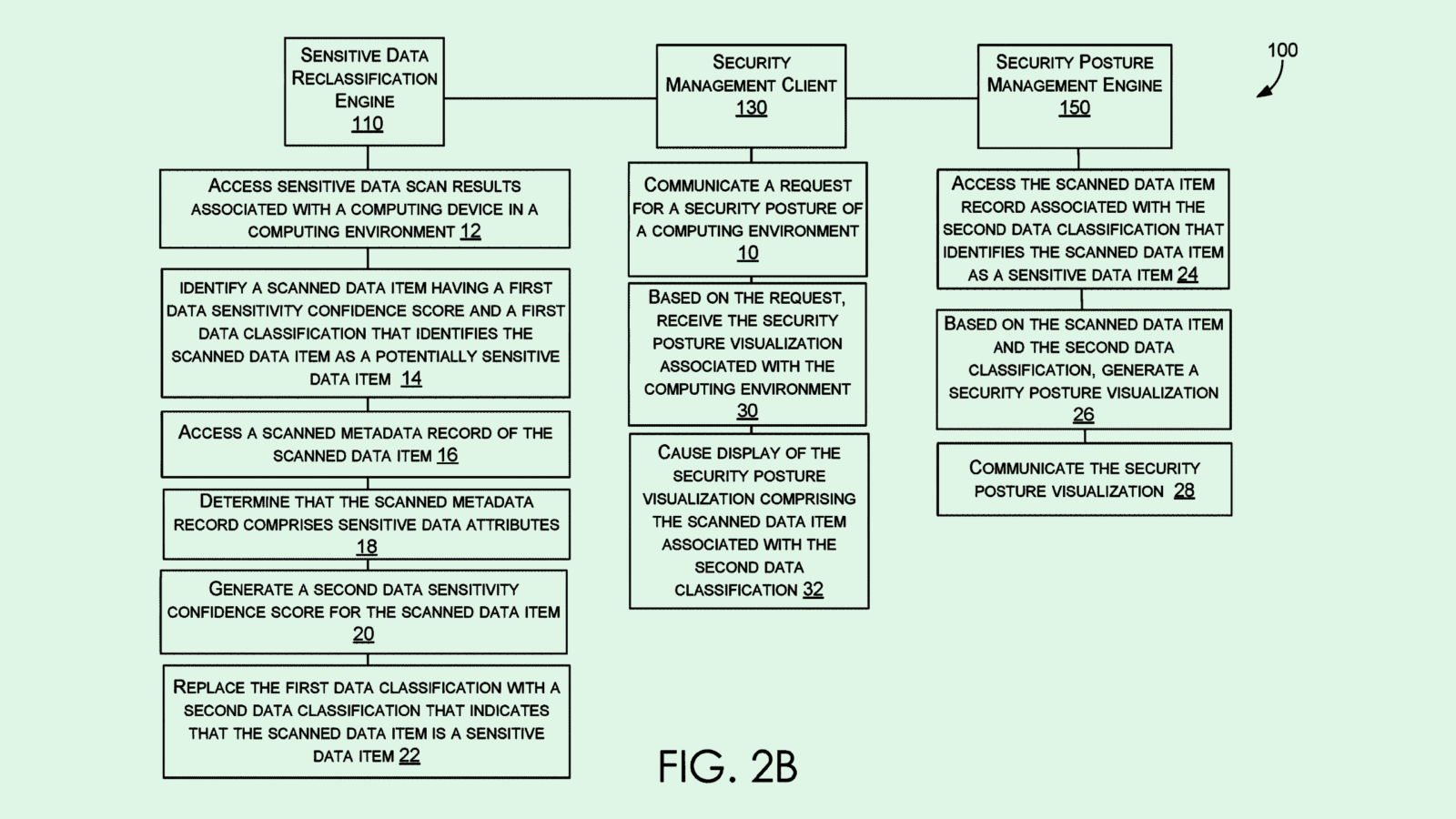

The company filed a patent application for what it calls “parameter efficient prompt tuning” for large-scale models. Basically, this allows developers to use a previously trained AI model for new tasks, without having to retrain it entirely.

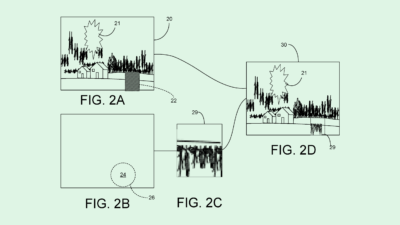

Google’s system pairs a small set of task-specific parameters with a large, general language model that’s already been trained. During training, the large model’s original parameters are “frozen,” meaning they cannot be altered by new training data. Only the small, task-specific set is trained.
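To make the frozen-model idea concrete, here is a minimal sketch in plain Python. It is a toy illustration, not the method described in Google's filing: the "pre-trained model" is a single fixed linear layer over averaged vectors, and every name (`frozen_model`, `tune_prompt`, the weights and examples) is hypothetical. What it shows is the division of labor: the model's weights are only ever read, while a small set of prompt vectors placed in front of the input is the only thing that gradient descent updates.

```python
import random


def frozen_model(vectors, weights):
    """Toy stand-in for a pre-trained model: mean-pool, then a fixed linear layer."""
    dim = len(weights)
    pooled = [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]
    return sum(w * p for w, p in zip(weights, pooled))


def mean_squared_error(prompt, weights, examples):
    """Average squared error when `prompt` is placed in front of each input."""
    errors = [frozen_model(prompt + x, weights) - y for x, y in examples]
    return sum(e * e for e in errors) / len(errors)


def tune_prompt(weights, examples, prompt_len=2, steps=100, lr=0.1, seed=0):
    """Learn prompt vectors by gradient descent; `weights` is read, never written."""
    rng = random.Random(seed)
    dim = len(weights)
    prompt = [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(prompt_len)]
    for _ in range(steps):
        for inputs, target in examples:
            seq = prompt + inputs
            error = frozen_model(seq, weights) - target
            # For loss = error**2, d(loss)/d(prompt[j][i]) = 2 * error * weights[i] / len(seq)
            for row in prompt:
                for i in range(dim):
                    row[i] -= lr * 2 * error * weights[i] / len(seq)
    return prompt


# Hypothetical setup: the "task" is to shift the frozen model's output by +1.
weights = [0.5, -1.0, 2.0]          # frozen parameters
zero = [0.0, 0.0, 0.0]
inputs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
examples = [([x], frozen_model([zero, zero, x], weights) + 1.0) for x in inputs]

prompt = tune_prompt(weights, examples)
print("error with an empty (all-zero) prompt:", mean_squared_error([zero, zero], weights, examples))
print("error with the tuned prompt:", mean_squared_error(prompt, weights, examples))
```

In this toy, the trained prompt holds six numbers and the model's three weights stay exactly as they were. A real system would use a neural network and an automatic differentiation library, but the split between frozen and trainable parameters is the same idea.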

For example, if a developer wants to train a general language model to respond to specific queries relating to a clothing boutique for an ecommerce chatbot, this system might train a small subset of parameters to understand things such as return policies, shipping information, or store hours, and fit those into a general model. The result would essentially be a more flexible chatbot capable of answering a wide array of questions and responding to user queries more naturally.

This approach allows developers to leverage the knowledge an AI model gained from previous training and adapt the model to specific tasks, without having to go through the process of transfer learning (when an AI model’s knowledge is transferred to a different AI model).

“Transfer learning can be difficult for many people to use due to computational resources needed and parallel computing expertise,” Google noted. “Additionally, transfer learning can require a new version of the large pre-trained model for each new task.”
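The "new version for each new task" point is easiest to see as arithmetic. The sketch below compares how many parameters need to be stored under each approach. The sizes are made-up round numbers chosen purely for illustration, not figures from Google's filing, and the function name is hypothetical.

```python
def stored_parameters(model_params, prompt_params, num_tasks):
    """Return (full copies, shared model plus prompts) parameter counts."""
    # One complete copy of the large model per task.
    full_copies = model_params * num_tasks
    # One shared frozen model, plus a small set of prompt parameters per task.
    shared_model = model_params + prompt_params * num_tasks
    return full_copies, shared_model


# Illustrative assumptions only: a 1-billion-parameter model,
# 100,000 prompt parameters per task, and 10 tasks.
full, shared = stored_parameters(1_000_000_000, 100_000, 10)
print(f"a full model per task:   {full:,} parameters")
print(f"shared model + prompts:  {shared:,} parameters")
```

Under these assumed sizes, each additional task adds a sliver of new parameters instead of another full copy of the model.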

If its patent applications, startup investments, and both consumer and enterprise-grade product developments have made anything clear, it’s that Google wants to be the “It Girl” of AI. But that development comes at a very high cost, both in computational resources and cash. In 2022, Google parent company Alphabet spent more than $30 billion on research and development, with a large chunk of that likely being funneled into AI development.

Previous filings for diffusion models that suck up fewer resources, as well as a recent filing from Google’s AI arm DeepMind for an AI energy budget planner, suggest that the company is looking for ways to save on energy, time and money all at once. And this issue stretches far beyond Google: both Microsoft and Intel are looking at ways to make AI more efficient, and the impending energy problem that Big Tech’s AI goals are causing is well-documented.

While transfer learning can certainly save time on AI training, doing so still takes up significant energy, as all of the parameters of the new model still need to be trained to some degree.

However, this patent could pave the way to creating a one-size-fits-all general language model without needing to copy, paste and redo the entire thing, as one would with transfer learning.

But beyond internal uses, this tool could prove invaluable to any company that wants a chatbot that answers questions flexibly with minimal training. Marketing this as an easy AI service for less technically inclined enterprises could prove to be lucrative.