PayPal Looks to New Cybersecurity to Build Public Trust

PayPal’s filing could signal that the company is trying to build the same public trust that traditional financial institutions have.


PayPal wants to know if you can trust who you’re Venmo-ing.

The fintech giant filed a patent application for a “trust score investigation” cybersecurity tool. PayPal describes its methods as “confidence determination tools based on peer-to-peer interactions.”

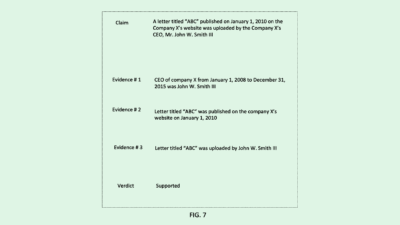

PayPal’s system works essentially as a large-scale sorting mechanism for transactions, with your trustworthiness decided based on where your transactions are sorted. Using what PayPal calls “clustering algorithms,” this system predicts relationships between transactions based on the recipient of the money, the amount sent and the time of the transactions. These predictions guide the system in classifying and clustering certain transactions.
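For a rough sense of what sorting transactions by recipient, amount and time could look like, here is a minimal Python sketch. It is not the method in PayPal's filing: it swaps in a simple greedy grouping rule for whatever clustering algorithm the patent actually uses, and the `Transaction` fields, the `cluster_transactions` function, the one-week time window and the 25% amount tolerance are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    # Hypothetical record layout; the filing does not specify one.
    sender: str
    recipient: str
    amount: float      # dollars
    timestamp: float   # seconds since epoch


def cluster_transactions(transactions, max_gap_seconds=7 * 24 * 3600,
                         amount_tolerance=0.25):
    """Greedily group transactions that share a recipient, fall close
    together in time, and have similar amounts (assumed thresholds)."""
    by_recipient = defaultdict(list)
    for tx in transactions:
        by_recipient[tx.recipient].append(tx)

    clusters = []
    for recipient_txs in by_recipient.values():
        recipient_txs.sort(key=lambda tx: tx.timestamp)
        current = [recipient_txs[0]]
        for tx in recipient_txs[1:]:
            prev = current[-1]
            close_in_time = tx.timestamp - prev.timestamp <= max_gap_seconds
            similar_amount = (abs(tx.amount - prev.amount)
                              <= amount_tolerance * max(prev.amount, tx.amount))
            if close_in_time and similar_amount:
                current.append(tx)
            else:
                # Start a new cluster when either signal diverges.
                clusters.append(current)
                current = [tx]
        clusters.append(current)
    return clusters


DAY = 24 * 3600
sample = [
    Transaction("alice", "bob", 50.0, 0 * DAY),
    Transaction("alice", "bob", 52.0, 1 * DAY),
    Transaction("alice", "bob", 500.0, 2 * DAY),
    Transaction("alice", "carol", 20.0, 0 * DAY),
]
clusters = cluster_transactions(sample)
```

In this toy data, the two similar payments to the same recipient end up together, while the much larger payment and the payment to a different recipient each land in their own group.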

Each transaction within a group is then classified once again based on transaction time and dollar amount, and using these classifications, a confidence score is assigned to the transactions. That score is attached to a user’s account, and recalculated based on further transactions.
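The second-pass classification and the running account score might be sketched along these lines. Everything here is an assumption for illustration rather than something taken from the filing: the `classify` rule, the three labels, the weights in `CLASS_CONFIDENCE` and the `AccountScore` class are hypothetical, and the simple running average stands in for however PayPal would actually recalculate a score.

```python
from statistics import median

# Hypothetical confidence weights per classification label.
CLASS_CONFIDENCE = {"routine": 1.0, "mixed": 0.6, "unusual": 0.2}


def classify(amount, hour, group_amounts, group_hours):
    """Label a transaction relative to its group by amount and time of day.

    Assumed rule: an amount is typical if it is within 50% of the group's
    median, and a time is typical if it is within 3 hours of the group's
    median hour. Midnight wraparound is ignored to keep the sketch short.
    """
    mid_amount = median(group_amounts)
    typical_amount = abs(amount - mid_amount) <= 0.5 * mid_amount
    typical_time = abs(hour - median(group_hours)) <= 3
    if typical_amount and typical_time:
        return "routine"
    if typical_amount or typical_time:
        return "mixed"
    return "unusual"


class AccountScore:
    """Running average of per-transaction confidence for one account."""

    def __init__(self):
        self.total = 0.0
        self.count = 0

    def update(self, label):
        # Recalculate the score as each new transaction is classified.
        self.total += CLASS_CONFIDENCE[label]
        self.count += 1
        return self.score

    @property
    def score(self):
        return self.total / self.count if self.count else None


group_amounts = [50.0, 52.0, 48.0, 500.0]
group_hours = [12, 13, 12, 3]

account = AccountScore()
labels = [classify(a, h, group_amounts, group_hours)
          for a, h in zip(group_amounts, group_hours)]
for label in labels:
    account.update(label)
```

The point of the sketch is only the shape of the pipeline: each transaction gets a label within its group, the label maps to a confidence value, and the account-level score shifts as new transactions arrive.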

This confidence score can be used to make several decisions related to the user, PayPal noted. It can help “indicate the risk in loaning money” to certain users, or guide who a user “may want to or not want to borrow money from.” In peer-to-peer loan scenarios, this score may impact interest rates, affect the amount of credit available to a user, or determine which currency a user can borrow in. (FYI: PayPal doesn’t currently offer peer-to-peer lending, but this could signal an interest from the company.)

PayPal said users currently lack tools for judging one another’s financial trustworthiness because of a lack of information or data, which leaves those judgments “clouded by emotions, (or) external unrelated factors that cause a bias.” PayPal said in its filing that its system aims to provide “investigative tools to help make better trust judgments based on objective information.”

We’ve seen a number of cybersecurity and fraud detection patents from PayPal attempting to make its systems safer, including user-end tools like voice-based biometric authentication and back-end data storage techniques that aim to obscure personally identifiable information in photos. And with hundreds of millions of customers, the company has a vested interest in fortifying its cybersecurity walls.

A safe platform also is important for gaining the same public trust that traditional financial institutions have, said Chase Norlin, CEO of cybersecurity workforce firm Transmosis. While fintechs like PayPal have grown in popularity over the last few decades, traditional financial institutions like Bank of America, Wells Fargo and JPMorgan Chase have gained trust mostly due to longevity rather than their technological prowess, he noted.

“PayPal is being very savvy about strengthening the structure of their financial trading platform, which in today’s world generates way more trust than … a brand that’s just been around a long time,” Norlin said.

While a system like this could bring up potential privacy concerns, Norlin said that leveraging data to keep users from being defrauded and reduce the risks associated with peer-to-peer money transfers could further instill customer trust. Plus, though not mentioned in the patent, if PayPal implements proper opt-in or opt-out procedures, consumers would have the choice of whether or not to sacrifice privacy for security, he noted.

A system like this also may solve the issue of bias present in many AI-based lending scenarios, he added. Any bias in the system would depend entirely on the way that PayPal builds and implements it. But if it’s based purely on financial transaction-related data, as the patent intends, and doesn’t take a user’s personal information into account, it could be “incredibly egalitarian,” he said.

“Bias is a function … of how the system is set up and designed,” said Norlin. “If you’re looking at it from a pure data perspective, then there may be no bias whatsoever.”