Tesla Strengthens AI Vision Models for Self-Driving

The tech could prevent the phantom braking problem that Tesla vehicles are notorious for.

Tesla wants its cars to have a clearer picture of the road in front of them.

The company wants to patent a vision-based system with “thresholding for object detection.” Tesla’s filing lays out a neural network system that allows for more precision when detecting what’s in front of its cameras.

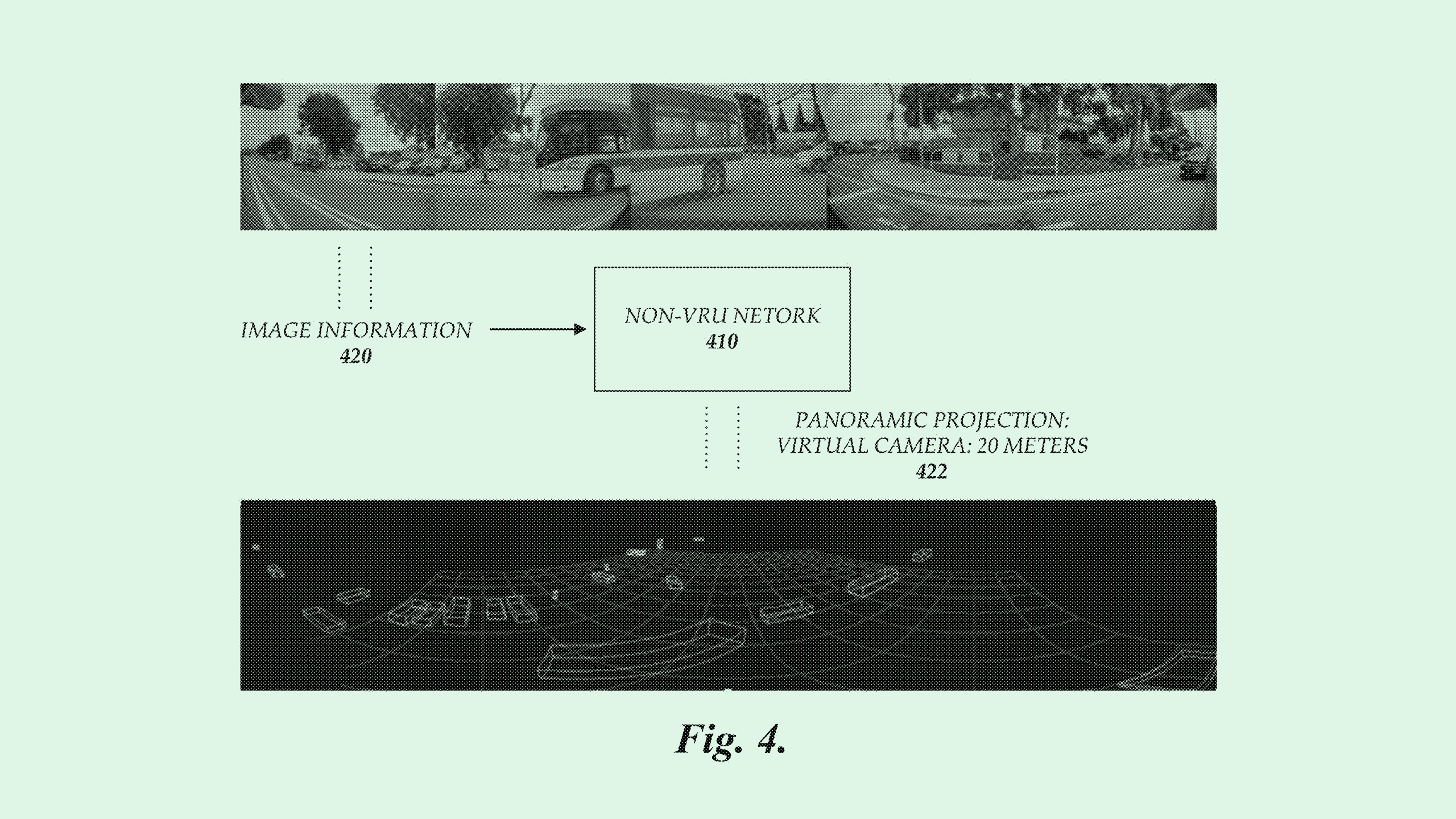

Typically, self-driving cars use a combination of detection systems, such as LIDAR, and AI-based vision systems to make decisions. Vision systems, Tesla said, are usually secondary sources for “confirming the detection of the object.” With this approach, “systems incorporating a combination of detection and vision systems do not require higher degrees of accuracy in the vision system for detection of objects,” the company noted.

Tesla’s system aims to build a more accurate vision-based machine learning model that can identify objects and estimate characteristics such as their position, velocity and acceleration. The system tracks objects over time and uses “thresholding” to ensure that the objects it tracks are actually, well, real.

“The utilization of thresholding on the output of the machine learning model can reduce errors, such as missing frames of video data, discrepancies in camera data, false positives, false negatives, and so on,” Tesla said.
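Tesla’s filing doesn’t spell out its thresholding in code, but one common way to make detections robust to missing frames and one-off false positives is a “k-of-n” rule: an object counts as real only if the model’s confidence clears a threshold in at least k of the last n frames. The sketch below is purely illustrative; the class name `PersistenceFilter` and the parameters `score_threshold`, `k` and `n` are hypothetical, not taken from the patent.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class PersistenceFilter:
    """Hypothetical k-of-n filter (an assumption, not Tesla's method).

    An object is 'confirmed' only if its per-frame confidence score
    meets `score_threshold` in at least `k` of the last `n` frames.
    """
    score_threshold: float = 0.5
    k: int = 3
    n: int = 5

    def __post_init__(self) -> None:
        # Sliding window of per-frame hit/miss flags; old frames fall off.
        self.history = deque(maxlen=self.n)

    def update(self, score: Optional[float]) -> bool:
        """Record one frame's confidence (None means the object was not
        seen in this frame) and return whether it is currently confirmed."""
        hit = score is not None and score >= self.score_threshold
        self.history.append(hit)
        return sum(self.history) >= self.k


# A one-frame flicker (e.g. a reflection) never gets confirmed...
flicker = PersistenceFilter()
print([flicker.update(s) for s in [0.9, None, None, 0.2, None]])

# ...while a steady object survives a dropped frame.
steady = PersistenceFilter()
print([steady.update(s) for s in [0.8, 0.7, None, 0.9, 0.85]])
```

The trade-off is latency: requiring several confirming frames filters out brief phantoms, but a genuine object is only confirmed after k hits.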

To put it simply, Tesla’s tech compares objects that it tracks over time (meaning ones it knows are real) to objects that appear in front of its sensors to determine whether they meet the threshold for detection. If the threshold is met, the object is detected and tracked for use in “downstream processes,” meaning it’s used to further train the machine learning model that makes those determinations.
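The patent doesn’t publish the comparison step itself either. A textbook stand-in is greedy nearest-neighbor association with a distance gate: each new detection is matched to the closest existing track only if it falls within a threshold distance, and anything left over is unmatched. The function `associate` and its `max_distance` parameter below are hypothetical and meant only to illustrate the idea.

```python
import math


def associate(tracks, detections, max_distance=2.0):
    """Greedy nearest-neighbor association with a distance gate
    (an assumed, simplified stand-in, not Tesla's algorithm).

    tracks: dict mapping track_id -> (x, y) predicted position
    detections: list of (x, y) positions seen in the current frame
    Returns (matches, unmatched), where matches maps track_id to a
    detection index and unmatched lists detection indices with no track.
    """
    # Every track/detection pair, sorted closest first.
    pairs = sorted(
        (math.dist(pos, det), tid, j)
        for tid, pos in tracks.items()
        for j, det in enumerate(detections)
    )
    matches, used = {}, set()
    for distance, tid, j in pairs:
        if distance > max_distance:
            break  # all remaining pairs are even farther apart
        if tid in matches or j in used:
            continue  # each track and each detection is used at most once
        matches[tid] = j
        used.add(j)
    unmatched = [j for j in range(len(detections)) if j not in used]
    return matches, unmatched


tracks = {1: (0.0, 0.0), 2: (10.0, 0.0)}
detections = [(10.5, 0.2), (0.3, -0.1), (30.0, 5.0)]
print(associate(tracks, detections))  # → ({1: 1, 2: 0}, [2])
```

Here the far-off third detection fails the gate. In a real tracker it would typically start a tentative track that must keep passing the threshold before being acted on.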

This prevents the system from detecting and acting on “phantom objects,” or ones that aren’t actually there, caused by things like reflections, smoke, fog or lens flares.

Tesla has long been committed to broader adoption of autonomous vehicles, marketing Full Self-Driving, Autopilot, and FSD Beta as primary features in its EVs. But the company has gotten in trouble with the National Highway Traffic Safety Administration (NHTSA) over these features in recent years.

The agency first opened an investigation into the company’s Autopilot feature in 2021 after multiple incidents in which Tesla drivers crashed into emergency vehicles. As the probe was about to wrap up in August, regulators raised additional concerns about a setting that lets drivers use self-driving for extended periods without putting their hands on the wheel.

And this week, Tesla recalled more than 2 million cars, nearly all of the vehicles it has sold in the U.S., to push a software update meant to ensure drivers are paying attention when using Autopilot.

The company has also faced a number of reports regarding “phantom braking.” NHTSA opened a separate investigation into sudden braking and acceleration after hundreds of reports of the issue from drivers in early 2022. And in May, a whistleblower leaked 100 gigabytes of confidential Tesla data to German newspaper Handelsblatt, including thousands of customer complaints about random stops and acceleration.

The company started rolling out an over-the-air update, FSD Beta V11.4.6, that aimed to put an end to phantom braking in the self-driving feature. Because Tesla no longer includes full release notes alongside its updates, it’s unclear whether this patent is part of the solution. But if the tech in this filing works as Tesla describes, it could help make its vehicles’ self-driving feature safer.